Navigating Ethics in AI Academic Writing: A Student's Practical Guide

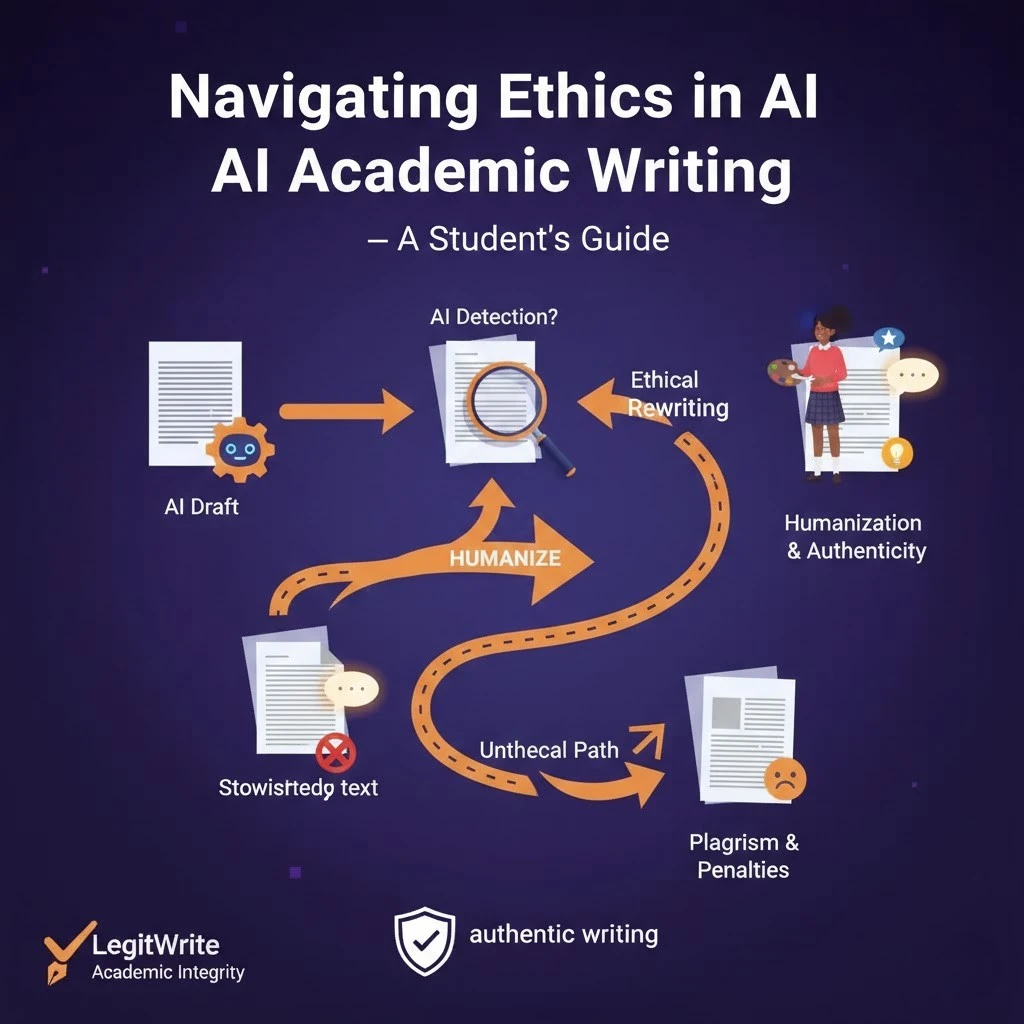

AI is now part of academic life. Some instructors welcome it, some restrict it, and many policies are still evolving. That uncertainty makes students anxious, and anxiety leads to bad decisions: hiding help, overusing tools, or avoiding tools that could be used responsibly.

Ethical AI use in school is not complicated when you reduce it to three principles:

- Ownership: you remain the author of the ideas and the argument.

- Transparency: you follow your course rules and disclose when required.

- Verifiability: you can defend every claim and every source.

This post gives you a clear way to decide what is acceptable, what is risky, and what is clearly not okay.

Start with the rule that matters most: your course policy

Every class can define "allowed assistance" differently. Before you write:

- Check the syllabus and assignment page.

- Look for policy language like "AI tools", "generative AI", "LLMs", "editing assistance", "paraphrasing tools".

- If the policy is unclear, email the instructor with a specific question (include the task and how you plan to use AI).

If your instructor says "no AI", treat that as a hard boundary. It does not matter what is common on social media. What matters is what you agreed to in that class.

A simple decision tree: Green / Yellow / Red

Use this as a quick ethics filter.

Green (usually acceptable)

These uses typically do not replace your authorship:

- Brainstorming topics and research questions.

- Generating study guides from your own notes (if permitted).

- Getting feedback on clarity, grammar, and structure using your draft.

- Asking for alternative outlines, then choosing and adapting one yourself.

- Turning bullet notes into a paragraph that you heavily revise and fact-check.

Yellow (context-dependent, ask first)

These uses can be fine in some classes and forbidden in others:

- Rewriting large sections for style ("make this more academic").

- Translating full drafts between languages.

- Producing summaries of sources you did not read yourself.

- Producing code, proofs, or calculations for graded work.

- Producing citations or bibliographies (AI often fabricates details).

If you do any of these, assume you will need to disclose it and document your process.

Red (ethically unsafe in most academic contexts)

These uses substitute the tool for your work:

- Generating a full response and submitting it with minimal edits.

- Generating analysis or interpretation you do not understand.

- Submitting invented sources, quotes, or data (even when the tool fabricated them and you did not notice).

- Using AI to evade assignment intent (e.g., "write it so it won't be flagged").

If the assignment is assessing you, then outsourcing the thinking defeats the purpose.
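The filter above can be sketched as a small lookup. This is only an illustration: the use labels, the `classify_use` function, and the `course_bans_ai` flag are hypothetical names invented for this example, and the mapping covers just a few of the uses listed above. Two assumptions are built in: a "no AI" course policy overrides everything, and any use not on the list defaults to Yellow (ask first).

```python
from enum import Enum


class Risk(Enum):
    GREEN = "usually acceptable"
    YELLOW = "context-dependent, ask first"
    RED = "ethically unsafe in most academic contexts"


# Hypothetical labels for a few of the example uses in this post.
USE_RISK = {
    "brainstorm_topics": Risk.GREEN,
    "feedback_on_own_draft": Risk.GREEN,
    "rewrite_large_sections": Risk.YELLOW,
    "translate_full_draft": Risk.YELLOW,
    "generate_citations": Risk.YELLOW,
    "submit_generated_response": Risk.RED,
    "invent_sources": Risk.RED,
}


def classify_use(use: str, course_bans_ai: bool = False) -> Risk:
    """Return the risk level for a planned AI use."""
    if course_bans_ai:
        # Assumption: a "no AI" policy is a hard boundary for every use.
        return Risk.RED
    # Assumption: uses not on the list default to YELLOW (ask first).
    return USE_RISK.get(use, Risk.YELLOW)


print(classify_use("brainstorm_topics").value)
print(classify_use("generate_citations").value)
print(classify_use("brainstorm_topics", course_bans_ai=True).value)
```

The point of the default is the same as the advice in the list: when a use is not clearly Green, treat it as a question for your instructor rather than guessing.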

The biggest misconception: "If I edited it, it's mine"

Editing does not create authorship. Authorship comes from:

- selecting claims,

- building an argument,

- choosing evidence,

- and making judgments about what is true and relevant.

You can ethically use AI to improve clarity. You cannot ethically use AI to replace your understanding and still present it as your own learning.

How to disclose AI use (without oversharing)

If your course requires disclosure, keep it simple and specific. The goal is to describe assistance, not to write an apology.

Example disclosure statements (adjust to your policy):

- "I used an AI tool to suggest outline options and to edit for clarity. All claims and sources were selected and verified by me."

- "I used AI to improve grammar and to propose alternative transitions. I did not use AI to generate ideas, analysis, or citations."

If the instructor provides a format, use their format.

The ethics of citations: AI is not a source

AI tools can help you find what to read, but their output is not evidence for a claim. If your discipline or instructor allows citing an AI tool, cite it as a tool, following your style guide's format for doing so, and still support the claim itself with sources you have read.

Practical rules:

- Do not cite a link you did not open.

- Do not cite a study you did not read.

- Do not let AI build your bibliography without manual verification.

If you ask AI for "five sources", treat the output as a search starting point, not a reference list.

Document your process (it protects you)

You do not need a 10-page log. Keep lightweight proof that you did the work:

- an outline with your notes,

- a few timestamped drafts,

- a list of sources you actually opened,

- and a short "what changed and why" note.

If a question ever comes up, this process evidence matters more than perfect prose.
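If you prefer to keep the "what changed and why" note in a file, a minimal sketch might look like the following. The function names, field names, and the one-JSON-object-per-line layout are all assumptions made for illustration; a dated text file or your editor's version history serves the same purpose.

```python
import json
from datetime import datetime, timezone


def log_entry(draft_name, what_changed, why, sources_opened=()):
    """Build one timestamped process note as a plain dictionary."""
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(timespec="seconds"),
        "draft": draft_name,
        "what_changed": what_changed,
        "why": why,
        # Only list sources you actually opened and read.
        "sources_opened": list(sources_opened),
    }


def append_to_log(path, entry):
    """Append the entry to a log file, one JSON object per line."""
    with open(path, "a", encoding="utf-8") as f:
        f.write(json.dumps(entry) + "\n")


entry = log_entry(
    "draft-2",
    "Reordered sections 2 and 3",
    "The counterargument needs to come before my rebuttal",
    sources_opened=["Course reader, week 4"],
)
append_to_log("process_log.jsonl", entry)
```

One short entry per writing session is enough; the value is in the timestamps and the reasons, not the volume.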

Avoiding false accusations: write with accountability

AI detection tools are imperfect, and writing can be flagged for reasons that have nothing to do with cheating (formal tone, consistent structure, lack of personal detail).

You cannot control every detector, but you can reduce risk by writing like a real student:

- Use concrete examples from lectures, labs, discussions, or readings.

- Show your reasoning steps, not just conclusions.

- Admit uncertainty when it is reasonable.

- Make your paragraphs do different jobs (some define, some argue, some test evidence).

Ethics and authenticity align here: real work looks like real work.

Ethical AI prompts (that keep you in charge)

Try prompts that force the tool into a support role:

- "Ask me five questions that would make this argument more precise."

- "Point out where my reasoning jumps too quickly."

- "Suggest counterarguments, then wait for my response before revising."

- "Rewrite this paragraph for clarity but keep my claims unchanged and do not add new facts."

When the tool is doing the questioning and you are doing the deciding, you stay the author.

When in doubt, choose the safer path

If you are unsure whether a use is allowed, choose one:

- Ask the instructor, or

- avoid the tool for that assignment, or

- use it only for "Green" tasks (clarity, brainstorming, outline options).

In 2026, policies will keep changing. The durable strategy is not to chase loopholes. It is to build a workflow that is defensible under any reasonable integrity standard: you wrote it, you understand it, and you can support it.