What Is Burstiness in AI Detection? (Plain-Language Guide)

If you have spent any time around AI detection tools, you have probably seen the word burstiness. It sounds technical, slightly vague, and often gets repeated without much explanation. People hear that detectors score burstiness, but many still do not know what that means in real writing.

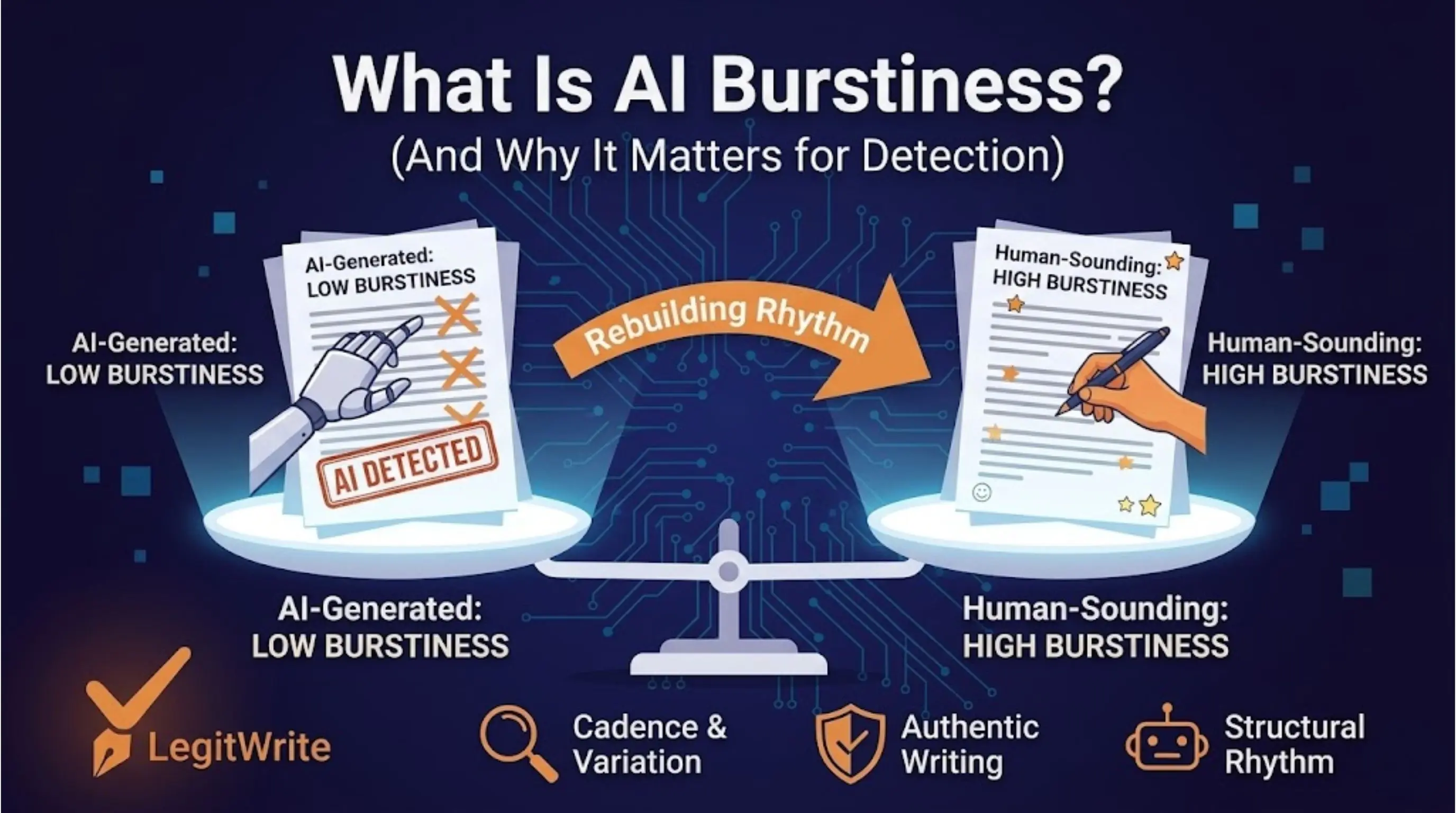

Here is the simple version: burstiness is about variation. Specifically, it refers to how much sentence length, rhythm, and structural pacing change across a passage.

Human writers usually produce writing with natural bursts. Some sentences are short and direct. Others are longer, more layered, and more analytical. AI writing often flattens that pattern. It tends to be smoother, more uniform, and more evenly paced from sentence to sentence.

That difference matters because detectors use it as one of the clues for deciding whether text looks machine-generated.

A quick definition of burstiness

Burstiness is the amount of variation in sentence shape and pacing across a text.

You can think of it this way:

- high burstiness = noticeable variation in sentence length and rhythm

- low burstiness = many sentences feel structurally similar and evenly paced

This is not just about one short sentence followed by one long sentence. It is about the broader distribution of rhythm across the whole passage.
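To make "distribution" concrete, here is a minimal Python sketch of one simple way to put a number on it: the coefficient of variation (standard deviation divided by mean) of sentence lengths in words. This is an assumed, illustrative proxy, not the formula GPTZero or any other detector uses. The function names are hypothetical, and the regex sentence splitter is deliberately naive (it will mis-split abbreviations such as "e.g.").

```python
import re
import statistics


def sentence_lengths(text: str) -> list[int]:
    """Return the word count of each sentence, using a naive . ! ? split."""
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    return [len(s.split()) for s in sentences]


def burstiness_proxy(text: str) -> float:
    """Coefficient of variation (population stdev / mean) of sentence lengths.

    Higher values mean sentence lengths vary more across the passage.
    Illustrative only: an assumed proxy, not any detector's actual metric.
    """
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        return 0.0  # too few sentences to measure variation
    return statistics.pstdev(lengths) / statistics.mean(lengths)


flat = (
    "The tool analyzes the text carefully. "
    "It then reports a single overall score. "
    "The score reflects several different signals. "
    "Users can review the result in detail."
)
varied = (
    "It failed. "
    "Nobody on the team expected the draft, which had been revised three times "
    "by two different editors, to be flagged at all. "
    "Why? "
    "The rhythm, apparently."
)

print(f"flat:   {burstiness_proxy(flat):.2f}")    # flat:   0.08
print(f"varied: {burstiness_proxy(varied):.2f}")  # varied: 1.24
```

The flat passage has sentence lengths of 6, 7, 6, and 7 words, so its score is close to zero; the varied passage swings between 1 and 22 words. A real detector would need far more text than four sentences for a stable estimate.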

Human writing often has uneven flow because human thought is uneven. We emphasize, digress, qualify, summarize, and pivot in ways that create a more irregular pattern.

AI writing, especially from default prompting, often sounds tidy. Too tidy.

Why detectors care about burstiness

Detectors cannot observe the writing process directly. They only see the final text. So they rely on signals that correlate with machine-generated writing.

Burstiness is useful to them because many language models produce output that is:

- statistically smooth

- rhythmically regular

- structurally repetitive

- low in surprise from sentence to sentence

That regularity is not always obvious to the casual reader, but it becomes measurable when you analyze a larger sample.

So when a detector says a passage looks AI-like, one reason may be that the variation profile is too flat.

Burstiness is not the same as quality

One common misunderstanding is that low burstiness signals bad writing. It does not.

A highly polished editor can produce very clean prose. An academic writer may deliberately keep the cadence steady. A technical writer may prioritize consistency over stylistic variation. None of those choices make the writing fake.

This matters because burstiness is a signal, not a verdict.

Detectors use burstiness because it helps them model probability. But probability is not authorship. That distinction is where many false positives begin.

How burstiness shows up in real writing

Here is a simplified comparison:

| Writing style | Burstiness profile | How it tends to feel |
|---|---|---|
| typical human draft | uneven, naturally varied | lively, slightly irregular, context-responsive |
| polished academic prose | moderate, controlled variation | formal but still human |
| AI default output | low, overly smooth | clear but rhythmically repetitive |
| paraphrased AI output | sometimes still low | wording changes, but pacing remains machine-like |

This is why swapping synonyms usually does not solve the deeper issue. The vocabulary changes, but the rhythm pattern may remain essentially the same.
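A small Python sketch of the point, assuming a hypothetical one-for-one synonym table in place of a real paraphrasing tool: the words change, but the per-sentence word counts, and therefore any burstiness measure based on sentence length, stay exactly the same.

```python
import re


def sentence_lengths(text: str) -> list[int]:
    """Word count per sentence, using a naive split after . ! or ?."""
    return [len(s.split()) for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]


# Hypothetical synonym table standing in for a paraphrasing tool.
SYNONYMS = {
    "important": "crucial",
    "shows": "reveals",
    "steady": "consistent",
    "guides": "leads",
}


def swap_synonyms(text: str) -> str:
    """Replace whole words via SYNONYMS; punctuation and word counts are untouched."""
    def replace(match: re.Match) -> str:
        word = match.group(0)
        return SYNONYMS.get(word, word)

    return re.sub(r"\b\w+\b", replace, text)


original = (
    "This step is important for the reader. "
    "The data shows a steady trend over time. "
    "The summary guides the reader through it."
)
paraphrased = swap_synonyms(original)

print(paraphrased)
print(sentence_lengths(original))     # [7, 8, 7]
print(sentence_lengths(paraphrased))  # [7, 8, 7]
```

Real paraphrasers also reorder phrases and rewrite some sentences, so their length profile can shift somewhat; the sketch isolates the synonym-swapping case the paragraph above describes.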

Why GPTZero made burstiness famous

GPTZero helped bring burstiness into public discussion by explaining AI detection in terms non-specialists could follow. In its framing, AI-generated writing often shows:

- lower perplexity

- lower burstiness

Perplexity describes predictability of language choices. Burstiness describes variation in rhythm and sentence structure. Together, they give detectors a rough picture of whether the writing looks too machine-like.
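For readers who want the math: perplexity has a standard definition for a language model that assigns probability $p(w_i \mid w_{<i})$ to each token given the tokens before it. The second line is an assumed, illustrative proxy for burstiness: the coefficient of variation of sentence lengths $\ell_1, \dots, \ell_n$ (in words). GPTZero's exact scoring formulas are not given here, and some descriptions define burstiness as the spread of per-sentence perplexity instead, so treat the second line as one reasonable choice rather than the detector's method.

```latex
\begin{aligned}
\mathrm{PPL}(w_{1:N}) &= \exp\!\left(-\frac{1}{N}\sum_{i=1}^{N}\log p(w_i \mid w_{<i})\right)\\
B &= \frac{\sigma_\ell}{\mu_\ell}, \quad
\mu_\ell = \frac{1}{n}\sum_{j=1}^{n}\ell_j, \quad
\sigma_\ell = \sqrt{\frac{1}{n}\sum_{j=1}^{n}\left(\ell_j-\mu_\ell\right)^2}
\end{aligned}
```

Lower $\mathrm{PPL}$ means the model found the text more predictable; lower $B$ means sentence lengths vary less. Those are the two "lower" signals in the framing above.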

GPTZero is not the only detector that uses this kind of logic, but it made the concept easier to talk about outside technical circles.

Turnitin and Originality.ai care about similar patterns even if they describe them differently

Different tools may not present burstiness with the same label, but they often react to related structural features.

They look for things like:

- repetitive paragraph pacing

- very regular transitions

- similar sentence lengths repeated across a passage

- clean but generic explanatory flow

So even when a detector does not explicitly show you a "burstiness score," it may still be sensitive to burstiness-related signals under the hood.

That is why writing that feels too even often travels badly across multiple detector platforms.

Low burstiness is common in AI writing for a reason

Language models are trained to predict the next token, and common decoding settings favor high-probability continuations. That tends to create outputs that are:

- coherent

- grammatically clean

- stylistically stable

- less dramatically varied than human thinking often is

This is part of why AI-generated writing can sound competent yet strangely uniform.

The model is doing what it was trained to do: generate likely continuations. But that tendency toward statistical smoothness is exactly what makes low burstiness such a useful detection signal.

Where low burstiness shows up most clearly

Certain sections of AI-generated writing are especially vulnerable:

Introductions

Introductions often use generic framing and evenly paced setup sentences. That makes them structurally repetitive.

Conclusions

Conclusions frequently compress ideas into tidy, balanced summary language. Detectors often recognize that clean pattern quickly.

Explanatory middle paragraphs

When AI explains a topic step by step, it often produces sequences of similarly shaped sentences and transitions.

These sections are not automatically AI-generated, but they are where low burstiness tends to be easiest to notice.

What high burstiness looks like without becoming chaotic

Some people hear "more variation" and assume the solution is randomness. It is not.

Healthy human burstiness looks like:

- short sentences used for emphasis

- longer sentences used when nuance is needed

- paragraph rhythm that changes with the idea

- transitions that feel natural rather than mechanically distributed

It does not mean turning a draft into an incoherent mess just to force variation.

Good human writing is not random. It is responsive.

Why paraphrasers often fail to fix burstiness

Paraphrasers focus mostly on surface-level editing:

- synonym replacement

- phrase reordering

- local sentence rewrites

That can alter vocabulary, but it does not necessarily rebuild the overall rhythm of the passage. If the underlying cadence remains flat, the detector can still see the same burstiness profile.

This is why users are often disappointed when a paraphrased draft still gets flagged. The visible wording changed, but the measurable variation did not change enough.

What this means in practice for writers

If a detector is reacting to burstiness, the useful question is not "Which words should I swap?" It is:

- where is the passage too uniform?

- which sections have overly even pacing?

- where do the transitions feel generic or repetitive?

- does the paragraph structure vary naturally?

Looking at the text this way is much more productive than chasing surface-level substitutions.

A practical burstiness checklist

Before you submit or publish a draft, look for these warning signs:

| Warning sign | Why it matters |
|---|---|
| too many medium-length sentences in a row | rhythm becomes mechanically even |
| every paragraph opens the same way | transitional structure becomes predictable |
| conclusions feel generic and balanced | summary shape that detectors recognize easily |
| explanations use repeated cadence | low structural surprise |
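Two of these warning signs are easy to check mechanically. The Python sketch below reports the longest run of consecutive medium-length sentences and any word that opens more than one paragraph. The word-count band for "medium-length," the paragraph split on blank lines, and the function names are all hypothetical choices for illustration, not thresholds any detector is known to use.

```python
import re
from collections import Counter


def split_sentences(paragraph: str) -> list[str]:
    """Naive sentence split on whitespace that follows . ! or ?."""
    return [s for s in re.split(r"(?<=[.!?])\s+", paragraph.strip()) if s]


def longest_medium_run(text: str, low: int = 10, high: int = 25) -> int:
    """Longest run of consecutive sentences whose word count is in [low, high].

    Runs continue across paragraph breaks. The default band is arbitrary.
    """
    longest = current = 0
    for paragraph in text.split("\n\n"):
        for sentence in split_sentences(paragraph):
            if low <= len(sentence.split()) <= high:
                current += 1
                longest = max(longest, current)
            else:
                current = 0
    return longest


def repeated_openers(text: str) -> dict[str, int]:
    """Map each paragraph-opening word used more than once to its count."""
    openers = Counter(
        paragraph.split()[0].strip(",.;:!?").lower()
        for paragraph in text.split("\n\n")
        if paragraph.split()
    )
    return {word: n for word, n in openers.items() if n > 1}


draft = (
    "Additionally, the method improves accuracy in most settings. "
    "It also reduces the time needed for review.\n\n"
    "Additionally, the results suggest broader benefits for teams. "
    "These benefits appear across several departments.\n\n"
    "Short note."
)

print(longest_medium_run(draft, low=6, high=10))  # 4
print(repeated_openers(draft))                    # {'additionally': 2}
```

A script like this only points at places worth rereading; the revision itself still has to follow the meaning of the passage.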

Then revise with structural intent:

- tighten some sentences sharply

- let others expand where necessary

- vary transition style

- break up repetitive paragraph patterns

This is not a gimmick. It is simply how natural human writing tends to work.

Burstiness and false positives

Burstiness is useful, but it is also one reason detectors can misfire on honest writers.

Writers most at risk include:

- ESL writers

- students writing formally

- technical writers

- professionals drafting in a highly standardized tone

These groups may produce lower-burstiness prose for legitimate reasons. That does not mean their writing is AI-generated. It means detectors sometimes confuse orderly human writing with machine-generated regularity.

So burstiness should help interpretation, not replace it.

Final takeaway

Burstiness is a measure of variation in sentence rhythm and structural pacing. Detectors care about it because AI writing often stays too smooth for too long. Human writing usually contains more natural variation, even when it is polished.

That does not make burstiness a magic answer. Low burstiness is not proof of AI authorship, and high burstiness is not proof of humanity. But it is one of the clearest clues detectors use when deciding whether a passage feels machine-made.

If you want to reduce detector risk, the real goal is not cosmetic paraphrasing. It is rebuilding the rhythm of the writing so it reflects human structure rather than machine smoothness. LegitWrite's AI Humanizer Free page is the natural next step if you want to see how structural humanization tackles burstiness directly instead of just swapping words.