Why AI Humanizers Don’t Work (And What Actually Reduces AI Detection Risk)

You ran your draft through an AI humanizer.

It looked different.

But it still got flagged.

If you are searching for why AI humanizers don’t work, whether AI humanizers are detectable, or whether they can bypass Turnitin, you are not alone.

The short answer is simple.

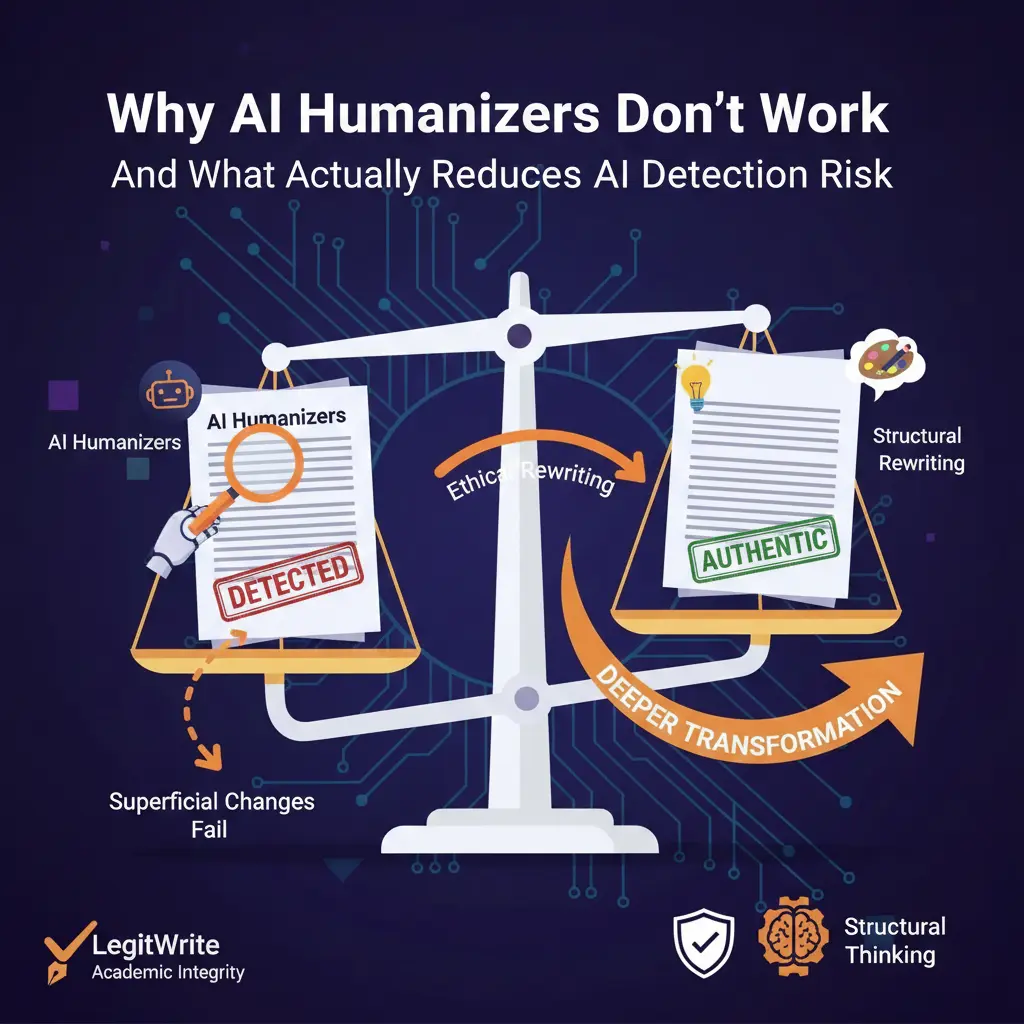

Most AI humanizers change words.

AI detectors analyze patterns.

That mismatch is why automated humanizers fail.

This guide explains:

- How AI detectors actually work

- Why paraphrasing does not meaningfully reduce AI detection

- Whether AI humanizers can bypass Turnitin

- What genuinely lowers AI detection risk

- How to improve AI-generated drafts responsibly

This article draws on structured research and detection analysis, expanded into a practical framework you can apply immediately.

Do AI Humanizers Actually Work?

In most cases, no.

Many AI humanizer tools operate like advanced paraphrasers. They:

- Replace vocabulary with synonyms

- Reorder sentence fragments

- Add filler transitions

- Slightly adjust grammar

While the text appears different to a reader, the statistical structure often remains similar.

Modern AI detectors do not simply scan for obvious phrases. They evaluate:

- Predictability (perplexity)

- Token probability distribution

- Sentence-length rhythm (burstiness)

- Structural consistency

- Semantic compression patterns

If those deeper signals stay intact, the text is still likely to be flagged.

That is why users frequently search:

- why AI humanizers don’t work

- are AI humanizers detectable

- can AI humanizers bypass Turnitin

- why does AI detection still flag my text

- do AI humanizers actually work

The frustration is real, and the cause can be measured.

How AI Detectors Actually Work

To understand why AI humanizers fail, you must understand how AI detection models operate.

Modern detection systems use statistical modeling rather than keyword spotting. They compare your writing against probability patterns typical of large language models.

Two foundational signals matter most, and more advanced detectors add a third, structural one.

1. Perplexity (Predictability)

Perplexity measures how predictable a sequence of words is to a language model.

Human writing tends to:

- Introduce uneven phrasing

- Shift tone naturally

- Vary vocabulary unpredictably

AI-generated text tends to:

- Follow high probability token paths

- Maintain smooth flow

- Avoid unexpected linguistic jumps

Lower perplexity raises the AI probability score.

Synonym swapping rarely changes this meaningfully.
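Perplexity is usually computed as the exponential of the average negative log-probability a language model assigns to each token in a text. The sketch below assumes you already have those per-token probabilities; the numbers are invented for illustration, and commercial detectors do not publish their exact scoring models.

```python
import math


def perplexity(token_probs):
    """Return exp(mean negative log-probability) over a list of token probabilities.

    Each value is the probability a language model assigned to the token that
    actually appeared. The inputs here are hypothetical; a real detector would
    obtain them from its own scoring model.
    """
    if not token_probs:
        raise ValueError("need at least one token probability")
    avg_neg_log_prob = -sum(math.log(p) for p in token_probs) / len(token_probs)
    return math.exp(avg_neg_log_prob)


# Invented probabilities: the model is rarely surprised by the first passage
# and often surprised by the second.
predictable = [0.9, 0.8, 0.85, 0.9, 0.95]
surprising = [0.4, 0.05, 0.3, 0.1, 0.6]

print(f"predictable passage: {perplexity(predictable):.2f}")  # lower perplexity
print(f"surprising passage:  {perplexity(surprising):.2f}")   # higher perplexity
```

A perplexity of 1 would mean the model predicted every token with certainty; if every token had a probability of 0.25, the perplexity would be exactly 4.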

2. Burstiness (Variation)

Burstiness measures variation in sentence length and structural rhythm.

Humans naturally alternate:

- Short sentences

- Long analytical passages

- Emphasis shifts

- Occasional abrupt transitions

AI output is often smoother and more uniform.

Low burstiness contributes to machine classification.

Replacing words does not significantly increase structural burstiness.
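There is no single standard formula for burstiness, and detectors do not publish theirs. One simple proxy is the coefficient of variation of sentence length (standard deviation divided by mean). The sketch below uses a naive regex sentence splitter and that proxy; both are illustrative assumptions, not any detector's actual method.

```python
import re
import statistics


def sentence_lengths(text):
    """Word count of each sentence, using a naive split on '.', '!' and '?'."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    return [len(s.split()) for s in sentences]


def burstiness(text):
    """Coefficient of variation of sentence length (population stdev / mean).

    0.0 means every sentence has the same length; larger values mean a more
    uneven rhythm. This is a simple proxy, not a specific detector's formula.
    """
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        return 0.0
    return statistics.pstdev(lengths) / statistics.mean(lengths)


uniform = (
    "The model writes a clear sentence here. "
    "The model writes a clear sentence again. "
    "The model writes a clear sentence now."
)
varied = (
    "Humans interrupt themselves. They emphasize differently. Like this. "
    "Then they follow with a longer analytical sentence that unpacks the point in detail."
)

print(sentence_lengths(uniform), round(burstiness(uniform), 2))
print(sentence_lengths(varied), round(burstiness(varied), 2))
```

The uniform passage scores 0.0 because all three sentences are seven words long; the varied passage mixes two- and three-word sentences with a fourteen-word one, so its score is much higher.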

3. Structural Probability Flow

Advanced detectors evaluate how information unfolds across paragraphs.

AI writing often:

- Presents evenly weighted arguments

- Maintains consistent logical pacing

- Avoids cognitive asymmetry

Humans emphasize unevenly. Some ideas expand. Others compress.

That imbalance is statistically natural.

Most AI humanizers do not alter this macro structure.
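Detectors do not publish how they model this macro structure, so the following is only a hypothetical proxy for "evenly weighted arguments": the normalized Shannon entropy of each paragraph's share of the total word count. A score of 1.0 means every paragraph gets the same number of words; expanding some ideas and compressing others pulls the score down.

```python
import math


def paragraph_word_counts(text):
    """Word count per paragraph, treating blank lines as paragraph breaks."""
    return [len(p.split()) for p in text.split("\n\n") if p.strip()]


def emphasis_evenness(word_counts):
    """Normalized Shannon entropy of each paragraph's share of total words.

    Returns 1.0 when all paragraphs are the same length and approaches 0 as
    one paragraph dominates. A hypothetical proxy, not a detector's metric.
    """
    counts = [c for c in word_counts if c > 0]
    if len(counts) < 2:
        return 1.0
    total = sum(counts)
    entropy = -sum((c / total) * math.log(c / total) for c in counts)
    return entropy / math.log(len(counts))


even_draft = [60, 60, 60, 60]    # every point gets equal space
uneven_draft = [140, 25, 70, 5]  # one idea expanded, others compressed

print(round(emphasis_evenness(even_draft), 3))
print(round(emphasis_evenness(uneven_draft), 3))
```

To score a real draft, pass `paragraph_word_counts(text)` for a text whose paragraphs are separated by blank lines.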

Why Paraphrasing Fails to Humanize AI Text

Most AI humanizers operate at the lexical layer, not the structural layer.

They adjust surface features while preserving:

- Core argument order

- Information density

- Logical transitions

- Probability distribution

From a detector’s perspective, the fingerprint remains similar.

This explains why users report:

"I humanized my essay and Turnitin still flagged it."

The rewrite altered vocabulary, not statistical identity.

Even heavily paraphrased AI text can retain low perplexity and low burstiness.

That is why one-click humanization tools rarely deliver a consistent reduction in detection scores.
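A toy example makes the mismatch concrete. The sketch below mimics a purely lexical humanizer with a hypothetical one-for-one synonym table. Because each word is replaced by exactly one word, every sentence keeps its original length, so a sentence-length rhythm measure sees no change even though the vocabulary does.

```python
import re

# Hypothetical one-for-one synonym table standing in for a lexical "humanizer".
SYNONYMS = {
    "shows": "demonstrates",
    "many": "numerous",
    "use": "employ",
    "important": "crucial",
}


def swap_synonyms(text):
    """Replace whole words found in SYNONYMS; punctuation is left untouched."""
    return re.sub(r"\b\w+\b", lambda m: SYNONYMS.get(m.group(0), m.group(0)), text)


def sentence_lengths(text):
    """Word count per sentence, using a naive split on '.', '!' and '?'."""
    return [len(s.split()) for s in re.split(r"[.!?]+", text) if s.strip()]


draft = (
    "Research shows that many students use AI tools. "
    "This trend is important. "
    "Educators use many strategies to respond."
)
rewritten = swap_synonyms(draft)

print(rewritten)
print(sentence_lengths(draft))      # rhythm before the swap
print(sentence_lengths(rewritten))  # identical rhythm after the swap
```

Real tools also reorder fragments and add filler transitions, so their output will not match the original this exactly; the toy version simply isolates the synonym-swapping step.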

Can AI Humanizers Bypass Turnitin?

This is one of the most searched questions in this niche.

The honest answer:

No tool can guarantee bypass.

Turnitin and similar platforms continuously update their detection models, which are trained on both raw and paraphrased AI text.

Some systems even attempt to distinguish:

- AI-generated only

- AI-generated and edited

- Mixed human and AI drafts

Detection models evolve.

Automated bypass claims are unstable.

More importantly, intentionally bypassing institutional safeguards can violate academic or workplace policies.

The safer objective is not bypassing detection.

It is improving clarity, structure, and authenticity while complying with disclosure requirements.

What Actually Reduces AI Detection Risk

If synonym replacement fails, what works?

The answer is structural transformation with meaning preservation.

1. Rewrite From Understanding

Do not treat the AI draft as final.

Extract the core claims.

Then rebuild the explanation in your own reasoning flow.

Change:

- Framing

- Emphasis

- Examples

- Narrative direction

When argument structure changes, probability patterns change.

2. Alter Information Hierarchy

AI often distributes ideas evenly.

Human writing is uneven.

Expand certain sections deeply. Compress others sharply.

Vary paragraph length intentionally.

This shifts token distribution and increases burstiness.

3. Introduce Original Insight

Add:

- Real examples

- Hypothetical scenarios

- Commentary

- Questions

- Clarifications

Original thought increases unpredictability naturally.

It also improves quality.

4. Adjust Rhythm

Humans interrupt themselves.

They emphasize differently.

They sometimes use very short sentences.

Like this.

Then follow with longer analytical ones.

That rhythm shift increases burstiness significantly compared to uniform AI flow.

5. Validate With Detection Tools

After rewriting structurally, run your draft through a reliable AI detection system.

If sections still show high probability, focus rewriting effort there.

Repeat until the draft reflects authentic human variation.
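The revise-and-recheck loop can be organized as a small triage step: score each section, then list the ones at or above a cutoff, worst first. In this sketch, `score` is a stand-in for whatever detector you use (no real detector API is assumed), the scores are invented, and the 0.5 threshold is an arbitrary placeholder rather than a recommended value.

```python
from typing import Callable


def sections_to_revise(
    sections: dict[str, str],
    score: Callable[[str], float],
    threshold: float = 0.5,
) -> list[tuple[str, float]]:
    """Score each section and return (name, score) pairs at or above threshold.

    `score` should return a probability-like value between 0 and 1. Results are
    sorted from highest to lowest score so the worst sections come first.
    """
    scored = [(name, score(text)) for name, text in sections.items()]
    flagged = [(name, s) for name, s in scored if s >= threshold]
    return sorted(flagged, key=lambda item: item[1], reverse=True)


# Hypothetical draft sections and made-up detector scores.
draft = {
    "intro": "Opening paragraph text.",
    "method": "Method paragraph text.",
    "discussion": "Discussion paragraph text.",
}
made_up_scores = {
    "Opening paragraph text.": 0.82,
    "Method paragraph text.": 0.31,
    "Discussion paragraph text.": 0.67,
}

for name, s in sections_to_revise(draft, made_up_scores.get):
    print(f"revise {name}: {s:.2f}")
```

After rewriting the flagged sections structurally, run the same check again; the loop ends when no section crosses the cutoff you have chosen.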

Are AI Humanizers a Scam?

Not necessarily.

Some tools improve readability.

Some reduce awkward phrasing.

Some help smooth grammar.

The problem is expectation.

If you expect a one-click undetectable AI text converter, you will likely be disappointed.

If you use AI for drafting and apply deliberate human structural editing afterward, results improve dramatically.

Ethical Considerations

It is critical to distinguish between:

- Refining AI-assisted drafts for clarity

- Intentionally deceiving academic or compliance systems

The first is legitimate editing.

The second may violate policies.

Always follow disclosure requirements of your institution or employer.

Responsible use protects long-term credibility.

Practical Framework: A Responsible Humanization Checklist

If you want a practical system, follow this workflow:

- Generate draft with AI

- Extract outline and key claims

- Rebuild structure manually

- Inject original insight

- Vary rhythm and paragraph size

- Validate with detection tool

- Refine flagged sections structurally

This approach focuses on quality improvement, not mechanical bypass.

Frequently Asked Questions

Why does AI detection still flag my humanized text?

Because your rewrite likely preserved statistical structure. Detectors analyze probability flow and rhythm, not just vocabulary.

Are AI humanizers detectable?

Yes. Most automated paraphrasers leave recognizable statistical patterns.

What is the best AI humanizer?

The most reliable approach combines AI drafting with meaningful human structural editing rather than relying on automated synonym replacement.

Does adding mistakes help avoid detection?

Artificially inserting errors usually lowers quality and may create new detectable patterns. It is not a reliable strategy.

Can AI humanizers beat GPTZero?

Heavy structural rewriting may produce short-term reductions, but no tool can guarantee a long-term bypass as detection models evolve.

Internal Resources

If you are refining AI-generated drafts, you may find these resources helpful:

- /ai-humanizer

- /ai-to-human-text-converter

- /bypass-ai-detectors

- /remove-ai-detection

These tools and guides focus on structural improvement rather than superficial rewriting.

Final Verdict

The reason AI humanizers don’t work comes down to one core issue.

They modify words.

Detectors analyze structure.

If you want to reduce AI detection risk responsibly, focus on rebuilding argument flow, injecting original insight, and rewriting from comprehension rather than substitution.

AI can assist drafting.

Human intention must shape the final output.

That difference changes the statistical fingerprint of a text.