How Does AI Detection Work? Perplexity, Burstiness, and What Detectors Actually Score

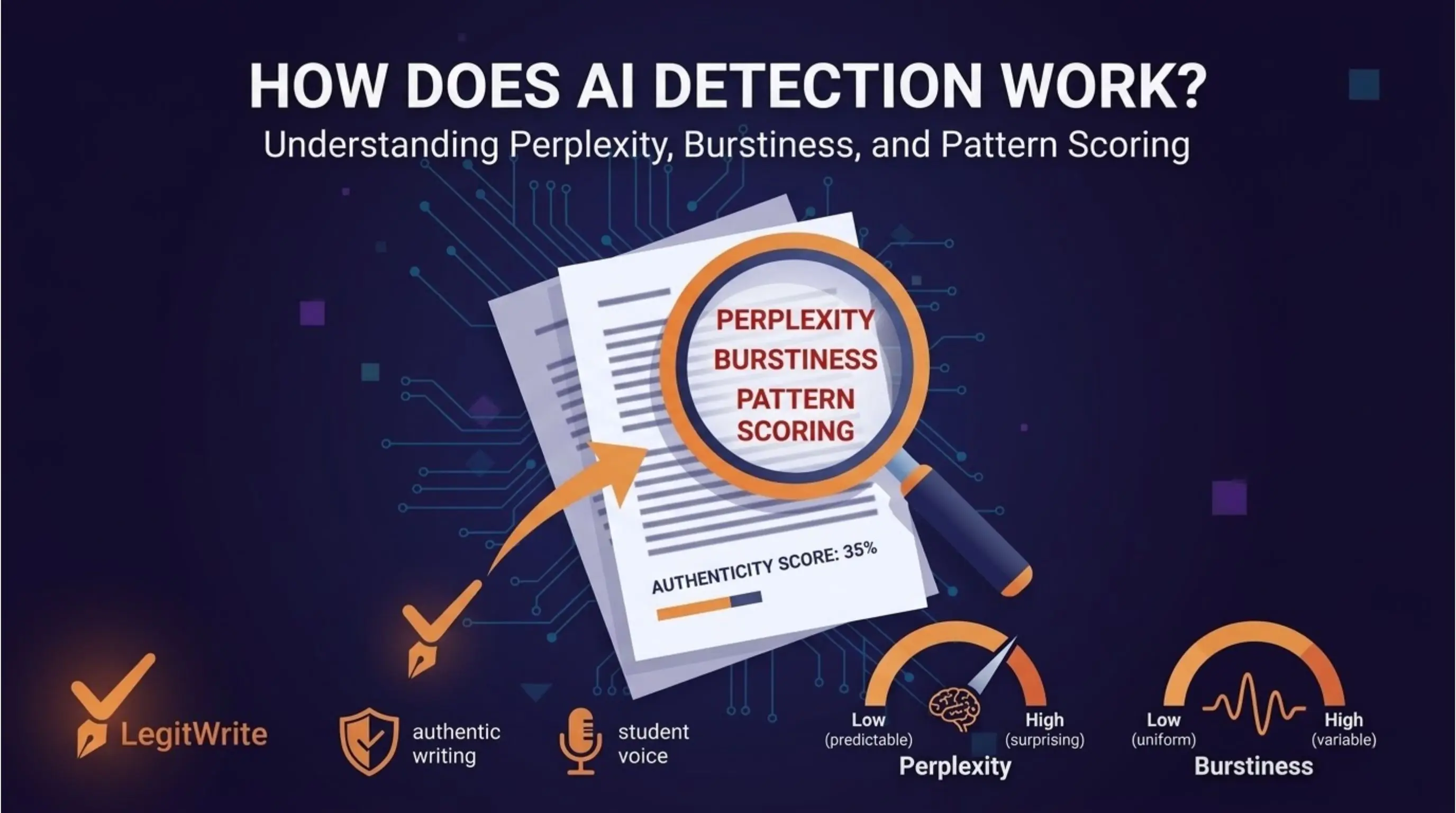

Most people who use AI detectors, or worry about them, have no idea what they actually measure. The common assumption is that detectors recognise an AI "style" or simply flag specific phrases. Neither is the whole story.

AI detectors are statistical tools. They measure mathematical properties of text and compare those properties against what AI-generated text typically looks like. Understanding those properties doesn't just satisfy curiosity. It tells you what actually puts your writing at risk and what doesn't.

The Core Problem Detectors Are Solving

When a large language model like GPT-4 generates text, it predicts a probability distribution over the next token (a word or word fragment) given everything that came before it, then selects from that distribution. It doesn't think. It doesn't have opinions. At every step, the choice is weighted toward high-probability, fluent output.

Human writers don't work this way. We make surprising choices. We start sentences in unexpected directions. We use words we half-remember from a book we read years ago. We repeat ourselves accidentally and then correct it. We write in ways that reflect genuine uncertainty, genuine knowledge, and genuine personality.

The statistical gap between these two processes is what AI detectors measure.

What Is Perplexity?

Perplexity is the most important concept in AI detection and the least well explained.

In technical terms, perplexity measures how "surprised" a language model is by a piece of text. Low perplexity means the text was highly predictable — every word choice was the obvious, high-probability option. High perplexity means the text surprised the model — word choices deviated from what was statistically expected.

AI-generated text has characteristically low perplexity because AI models are optimised to produce fluent, predictable output. They select high-probability words by design.

Human text has characteristically higher perplexity because humans make unexpected choices — unconventional phrasing, domain-specific jargon, personal references, emotional language, deliberate stylistic decisions that deviate from the statistical norm.

A perplexity-based detector runs your text through a language model and measures the average perplexity across the document. A very low score suggests AI generation; a higher, more variable score suggests human authorship.
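Under the hood, perplexity is the exponential of the average negative log-probability the model assigned to each token: PPL = exp(-(1/N) × Σ log p(token_i | previous tokens)). The minimal sketch below assumes you already have those per-token probabilities. In practice they would come from whatever language model the detector runs, and the numbers here are invented purely to contrast a predictable passage with a surprising one.

```python
import math

def perplexity(token_probs):
    """Return exp(average negative log-probability) over the tokens.

    token_probs holds the probability a language model gave to each token
    that actually appears in the text, conditioned on the tokens before it.
    """
    if not token_probs:
        raise ValueError("need at least one token probability")
    avg_neg_log_prob = -sum(math.log(p) for p in token_probs) / len(token_probs)
    return math.exp(avg_neg_log_prob)

# Invented probabilities, for illustration only.
predictable = [0.9, 0.8, 0.85, 0.9, 0.75]  # the model expected nearly every token
surprising = [0.9, 0.05, 0.3, 0.02, 0.6]   # several low-probability word choices

print(f"predictable: {perplexity(predictable):.2f}")
print(f"surprising:  {perplexity(surprising):.2f}")
```

A handy sanity check: if every token had probability 0.5, the perplexity would be exactly 2, as if the model were choosing between two equally likely options at each step.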

The practical implication: writing that is extremely clean, simple, and predictable will score low on perplexity even if a human wrote every word. This is one of the primary causes of false positives.

What Is Burstiness?

Burstiness measures variation in sentence complexity across a document.

Human writers naturally produce bursty text — long, complex sentences followed by short ones. Paragraphs that run for six lines followed by a single punchy sentence. Rhythm that varies because it reflects actual thought, which is itself variable.

AI-generated text tends to have low burstiness: sentences are more uniform in length and complexity. The model produces consistently fluent output without the natural spikes and dips that characterise human writing. Everything sits at about the same level of complexity, because the model is optimised for consistent quality.

Detectors measure burstiness by analysing sentence length distribution, syntactic complexity variation, and rhythm patterns across a document. A document with low variance in these measures is more likely to be flagged.
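Detectors don't publish their exact burstiness formula. GPTZero's original framing looked at how perplexity varies from sentence to sentence; a simpler proxy, used in the sketch below, is the coefficient of variation of sentence length (standard deviation divided by mean). The sentence splitter is deliberately naive, and both example passages are invented.

```python
import re
import statistics

def sentence_lengths(text):
    """Word counts per sentence, using a naive split on . ! or ? plus whitespace."""
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    return [len(s.split()) for s in sentences]

def burstiness(text):
    """Coefficient of variation of sentence length (0 = perfectly uniform)."""
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        return 0.0
    return statistics.pstdev(lengths) / statistics.mean(lengths)

uniform = "The cat sat on the mat. The dog lay on the rug. The bird sat in the tree."
bursty = ("I waited. Then, after what felt like an entire afternoon of pacing the "
          "hallway and rereading the same paragraph, the email finally arrived. Rejected.")

print(sentence_lengths(uniform), round(burstiness(uniform), 2))
print(sentence_lengths(bursty), round(burstiness(bursty), 2))
```

Dividing by the mean keeps the measure comparable between writers who favour long sentences and writers who favour short ones; only the variation counts.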

The practical implication: if you write in a very consistent, uniform style, even as a human, your burstiness score will be low, pushing your overall detection score higher. This may be one reason some highly trained writers, who have developed a consistent professional style, get false positives more often than less polished writers.

What Else Do Detectors Measure?

Beyond perplexity and burstiness, modern detectors use several additional signals:

Token probability distribution. Instead of just measuring average perplexity, sophisticated detectors look at the distribution of word-level probability scores across the document. AI text tends to cluster in a narrow high-probability band. Human text shows a wider spread with more outlier choices.
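Looking at the whole distribution rather than the average can be sketched with two summary numbers: the share of tokens that fall in a high-probability band, and how widely the log-probabilities are spread. The 0.5 band and the input probabilities are assumptions for illustration; commercial detectors don't disclose which statistics they actually compute.

```python
import math
import statistics

def probability_profile(token_probs, high=0.5):
    """Return (share of tokens with probability above `high`, std dev of log-probabilities).

    The 0.5 cut-off is an arbitrary illustrative choice, not a known detector setting.
    """
    if not token_probs:
        raise ValueError("need at least one token probability")
    share_high = sum(p > high for p in token_probs) / len(token_probs)
    spread = statistics.pstdev([math.log(p) for p in token_probs])
    return share_high, spread

# Invented per-token probabilities.
predictable = [0.9, 0.8, 0.85, 0.9, 0.75]
surprising = [0.9, 0.05, 0.3, 0.02, 0.6]

print(probability_profile(predictable))  # narrow, high-probability cluster
print(probability_profile(surprising))   # wider spread with outliers
```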

Repetition and phrase patterns. Certain transitional phrases, such as "It is worth noting," "Furthermore," "In today's rapidly evolving landscape," and "Delve into," appear at statistically anomalous rates in AI-generated content. Detectors can track how often these patterns occur and weight them in their scoring.
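A phrase-pattern check can be as simple as counting matches per 1,000 words. The phrase list below is just the examples from this paragraph; any real detector's list, and how it weights matches, is not public.

```python
import re

# Example phrases from this article only; not any detector's actual list.
FLAGGED_PHRASES = [
    "it is worth noting",
    "furthermore",
    "in today's rapidly evolving landscape",
    "delve into",
]

def phrase_rate(text, phrases=FLAGGED_PHRASES):
    """Flagged-phrase matches per 1,000 words (case-insensitive, word boundaries)."""
    word_count = len(text.split())
    if word_count == 0:
        return 0.0
    lowered = text.lower()
    hits = sum(len(re.findall(r"\b" + re.escape(p) + r"\b", lowered)) for p in phrases)
    return hits * 1000 / word_count

sample = "Furthermore, it is worth noting that we delve into the data."
print(round(phrase_rate(sample), 1))
```

Normalising by length matters: three stock phrases in a 10-word sentence is a very different signal from three in a 3,000-word essay.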

Structural regularity. AI models tend to produce text with very regular structural patterns — paragraphs of similar length, consistent argument structure, uniform spacing of evidence and analysis. Human writing shows more structural irregularity.

Vocabulary distribution. Humans tend to use a narrower vocabulary range in any given document — we have favourite words, habitual phrases, idiosyncratic word choices. AI models draw from a broader, more even vocabulary distribution.
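Two simple ways to put a number on vocabulary "range" and "evenness" are the type-token ratio (distinct words divided by total words) and the share of the text taken up by the single most frequent word. Neither is confirmed as what any particular detector uses, and the type-token ratio falls as texts get longer, so in this sketch comparisons only make sense between passages of similar length.

```python
import re
from collections import Counter

def vocabulary_stats(text):
    """Return (type-token ratio, share of tokens taken by the most common word)."""
    tokens = re.findall(r"[a-z']+", text.lower())
    if not tokens:
        return 0.0, 0.0
    counts = Counter(tokens)
    ttr = len(counts) / len(tokens)
    top_share = counts.most_common(1)[0][1] / len(tokens)
    return ttr, top_share

print(vocabulary_stats("The cat and the hat"))
```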

Semantic coherence patterns. How ideas connect across sentences and paragraphs follows different statistical patterns in AI versus human writing. AI transitions tend to be logically clean but semantically thin.

How Different Detectors Weight These Signals

Not all detectors use the same approach or weighting:

GPTZero was one of the first detectors and popularised the perplexity and burstiness framework. It analyses both average perplexity and perplexity variance across sentences, producing a score that reflects both overall predictability and structural variation.

Turnitin uses a proprietary model trained specifically on academic writing. It's calibrated to reduce false positives in academic contexts, which means it sets a higher threshold before flagging — but it's also specifically trained on the kinds of AI-generated academic essays students actually submit.

Originality.ai is calibrated for professional content marketing contexts and is more aggressive than most detectors. It's designed for publishers who want to catch AI content before it reaches their audience, where the cost of a false negative (missing AI content) is considered higher than the cost of a false positive.

Copyleaks combines AI detection with plagiarism detection and uses a neural network approach that looks at semantic patterns rather than purely statistical ones.

Winston AI focuses on document-level analysis with high-confidence thresholds, producing fewer but more reliable flags.

The key insight is that the same piece of text can score very differently on different detectors because each tool weights these signals differently and is calibrated for a different use case.
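A toy model makes the point concrete: feed the same signal scores into two detectors that differ only in their weights and flagging thresholds, and one flags the document while the other doesn't. The detector names, weights, thresholds, and scores below are all invented and do not describe any real product.

```python
# Invented signal scores for one document, each scaled 0-1 (higher = more AI-like).
signals = {"perplexity": 0.7, "burstiness": 0.6, "phrases": 0.2}

# Two hypothetical detector profiles: (weights, flagging threshold).
detectors = {
    "cautious": ({"perplexity": 0.5, "burstiness": 0.3, "phrases": 0.2}, 0.7),
    "aggressive": ({"perplexity": 0.6, "burstiness": 0.3, "phrases": 0.1}, 0.5),
}

def weighted_score(signals, weights):
    """Weighted sum of the signal scores (weights here sum to 1)."""
    return sum(weights[name] * value for name, value in signals.items())

def verdicts(signals, detectors):
    """Map each detector name to True if its weighted score reaches its threshold."""
    return {
        name: weighted_score(signals, weights) >= threshold
        for name, (weights, threshold) in detectors.items()
    }

for name, (weights, threshold) in detectors.items():
    print(name, round(weighted_score(signals, weights), 2), "threshold", threshold)
print(verdicts(signals, detectors))
```

The scores themselves are close; the disagreement comes almost entirely from where each tool draws its line.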

Why Detection Is Hard — and Getting Harder

AI detection faces a fundamental arms race problem. As detectors improve, AI models improve. As AI models are fine-tuned to produce more varied, less predictable output, detection becomes harder. As detection becomes harder, detectors add more sophisticated signals.

There are also fundamental theoretical limits. Since AI models are trained on human writing, the statistical distributions overlap significantly. A human writing in a simple, clear style will tend to produce text that resembles AI output. An AI prompted to write with deliberate variation and imperfection can produce text that resembles human output.

This means no detector currently achieves — or is likely to achieve — perfect accuracy. False positives and false negatives are not bugs. They're inherent features of a probabilistic system working at the boundary of two overlapping distributions.
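The trade-off is easy to see with two invented score distributions that overlap. Moving the flagging threshold only trades false positives for false negatives; because some human documents score higher than some AI documents, no threshold drives both to zero.

```python
def error_rates(human_scores, ai_scores, threshold):
    """Return (false-positive rate, false-negative rate); score >= threshold means flagged."""
    false_pos = sum(s >= threshold for s in human_scores) / len(human_scores)
    false_neg = sum(s < threshold for s in ai_scores) / len(ai_scores)
    return false_pos, false_neg

# Invented detector scores; the two groups overlap between 0.45 and 0.65.
human = [0.1, 0.2, 0.35, 0.5, 0.65]
ai = [0.45, 0.6, 0.75, 0.85, 0.95]

for threshold in (0.3, 0.5, 0.7, 0.9):
    fp, fn = error_rates(human, ai, threshold)
    print(f"threshold {threshold}: false positives {fp:.0%}, false negatives {fn:.0%}")
```

A detector tuned to protect writers (a high threshold) misses more AI text; one tuned to catch AI text (a low threshold) flags more humans. That is the same choice the calibration differences between tools reflect.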

What This Means for Your Writing

Understanding the mechanics of detection has practical implications whether you're a student, a content creator, or a professional writer:

Low perplexity is the primary risk factor. If your writing is very clean, very simple, and very predictable, it is more likely to score high on AI detection, regardless of whether you used AI. The solution isn't to write worse; it's to write with more genuine specificity, more personal voice, and more unexpected choices.

Uniform sentence length is a reliable signal. Read your draft out loud. If every sentence feels roughly the same length and weight, vary them deliberately. This isn't gaming the detector — it's better writing.

Transition phrases are a specific vulnerability. If your writing is full of "Furthermore," "In addition," "It is important to note," replace them with transitions that are specific to your actual argument. "This matters because..." is better than "Furthermore..." in every way.

Check before you submit. Tools like LegitWrite's AI Detector show you your score and highlight the specific sections driving it. Running your own content before submission gives you the chance to revise on your terms.

No score is absolute. Every detector is probabilistic. A high score is a flag for human review, not a verdict. Understanding this helps you respond to a false positive with evidence rather than panic.

Summary: What Detectors Actually Measure

| Signal | What it measures | AI pattern | Human pattern |
|---|---|---|---|
| Perplexity | How predictable word choices are | Low (predictable) | Higher (varied) |
| Burstiness | How much sentence complexity varies | Low (uniform) | Higher (variable) |
| Phrase patterns | Frequency of AI-associated transitions | High frequency | Lower frequency |
| Structural regularity | Consistency of paragraph and argument structure | High regularity | More irregular |
| Vocabulary distribution | Range and evenness of word choice | Broad and even | Narrower, idiosyncratic |

AI detection is not magic and it is not infallible. It is a statistical measurement system with known strengths, known weaknesses, and known failure modes. The writers who navigate it best are the ones who understand what it's actually looking at.

Muhammad Awais is a writer and blogger covering AI tools, detection technology, and content authenticity. Follow on Medium.

Want to see exactly how your writing scores — and which sections are driving the result? Try LegitWrite's free AI Detector — no signup required.