Does QuillBot Bypass AI Detection?

QuillBot is one of the most familiar rewriting tools on the internet. Students use it, marketers use it, and content teams use it whenever they want cleaner phrasing or a quick paraphrase. That popularity leads to an obvious question: can QuillBot also help bypass AI detection?

The honest answer is: sometimes it lowers the score a little, but it is not built to solve the real detection problem.

QuillBot is primarily a paraphrasing tool. AI detectors are primarily structural pattern detectors. Those two things overlap only partially. If you understand the difference, it becomes much easier to predict when QuillBot will help, when it will disappoint, and why some users keep getting flagged after using it.

Short answer: QuillBot can change wording, not the full AI fingerprint

Here is the practical summary.

| Question | Answer |
|---|---|
| Can QuillBot rewrite AI text? | Yes, at the sentence level and phrase level |
| Can it reduce some detector scores? | Sometimes, especially on weaker detectors |
| Is it reliable against Turnitin, Originality.ai, or strong GPTZero checks? | Not consistently |
| Why not? | It changes wording more than structure |

So if your use case is improving fluency, QuillBot can help. If your use case is removing the deeper AI signature from a document, it is usually not enough on its own.

What QuillBot actually does well

To be fair, QuillBot solves real problems.

It is useful for:

- rephrasing awkward sentences

- improving readability

- shortening or expanding some passages

- helping non-native English writers polish wording

- creating alternate phrasings quickly

Those are legitimate editing tasks. If you have a human-written draft that is clunky or repetitive, QuillBot can speed up cleanup work.

The confusion starts when users assume that paraphrasing for readability is the same as rewriting for detector resistance. It is not.

Why AI detectors still catch QuillBot-rewritten text

Modern AI detectors do not rely on identifying exact phrases like "in conclusion" or "it is important to note." They analyze deeper signals such as:

- how predictable the sentence flow is

- how regular the paragraph structure is

- how much sentence length varies

- how transitional logic repeats across the document

QuillBot often preserves these signals because it typically keeps the same:

- core sentence intent

- paragraph order

- rhetorical progression

- overall rhythm

That means the text may look different but still behave statistically like AI writing.
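
One of the signals listed above, sentence-length variation, is easy to approximate. The Python sketch below is an illustration only: commercial detectors use model-based scoring that this does not reproduce, and the function names (`sentence_lengths`, `length_variation`), the naive sentence splitter, and the sample texts are all assumptions made for the example.

```python
import re
import statistics


def sentence_lengths(text: str) -> list[int]:
    """Word count per sentence, using a naive split after ., ! or ?."""
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    return [len(s.split()) for s in sentences]


def length_variation(text: str) -> float:
    """Coefficient of variation (stdev / mean) of sentence lengths.

    Returns 0.0 when there are fewer than two sentences.
    """
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        return 0.0
    return statistics.pstdev(lengths) / statistics.mean(lengths)


# Hypothetical sample texts, written for this example.
original = (
    "AI tools are changing how people write. "
    "They make drafting faster for many teams. "
    "They also raise questions about originality."
)
# Surface paraphrase: synonyms swapped, sentence shapes untouched.
paraphrase = (
    "AI software is transforming how individuals write. "
    "It makes drafting quicker for numerous teams. "
    "It also prompts concerns about originality."
)
# Structural rewrite: sentences merged, split, and reordered in emphasis.
restructured = (
    "AI tools are changing how people write, and for many teams "
    "that mostly means faster drafts. Fine. But it also raises a "
    "question nobody has fully answered: what counts as original?"
)

print(sentence_lengths(original))    # [7, 7, 6]
print(sentence_lengths(paraphrase))  # [7, 7, 6]
print(length_variation(original) < length_variation(restructured))  # True
```

In this toy case the paraphrase changes most of the words yet keeps exactly the same sentence-length profile, while the restructured version does not. That is the sense in which wording can change while the rhythm stays put.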

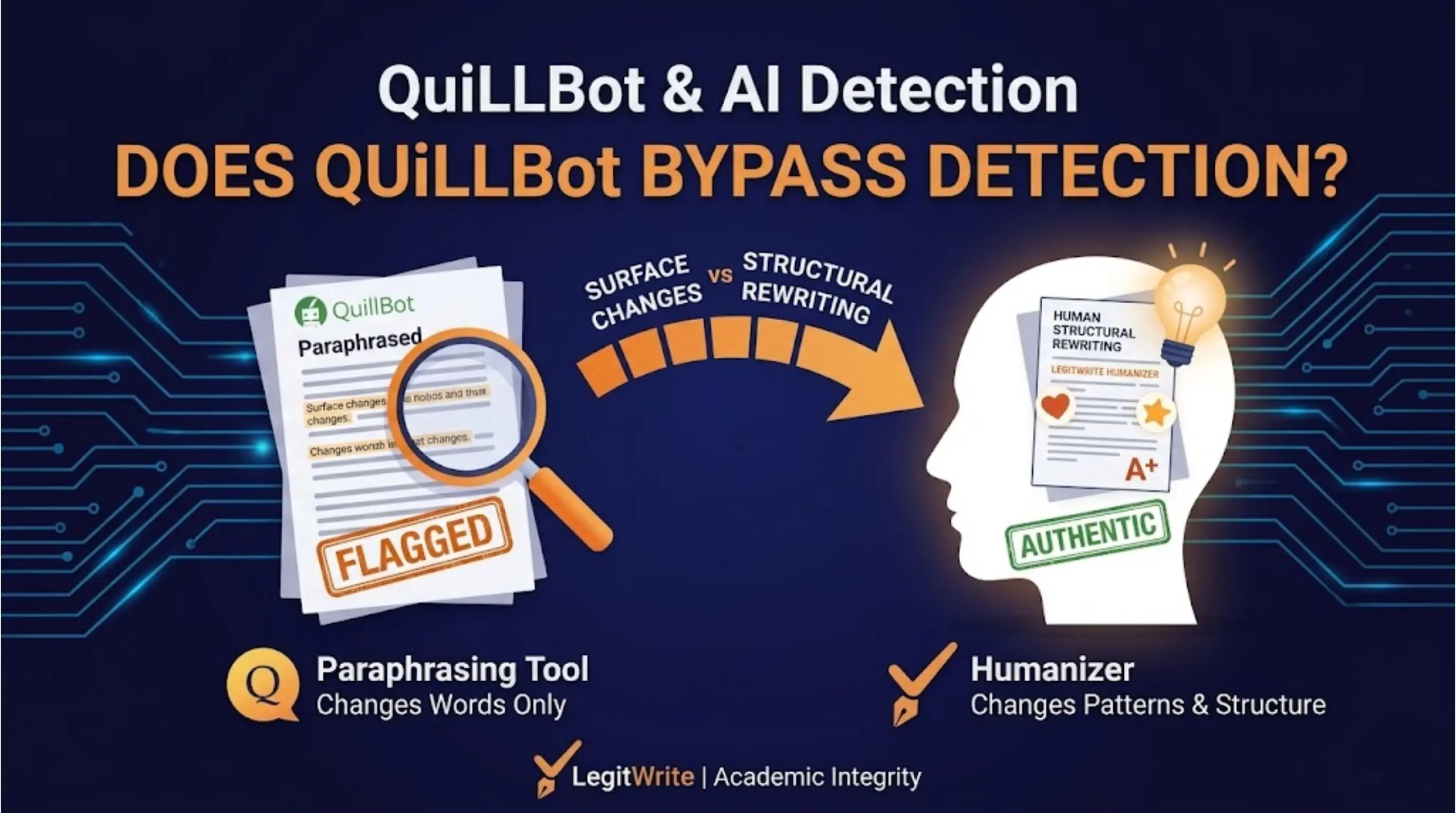

Surface paraphrasing versus structural rewriting

This is the most important distinction in the whole conversation.

Surface paraphrasing

This is what QuillBot is mainly designed to do:

- replace words

- reorder clauses

- slightly alter sentence form

- preserve the same paragraph architecture

Structural rewriting

This is what detector-aware humanization requires:

- vary sentence length intentionally

- change paragraph rhythm

- alter transitions and information flow

- rebuild high-risk sections like intros and conclusions

A detector score drops meaningfully when the underlying fingerprint changes. Surface paraphrasing often leaves that fingerprint mostly intact.

When QuillBot might appear to work

There are cases where users think QuillBot "bypassed" a detector:

1. The detector was weak to begin with

Some free detectors are inconsistent. A text might pass because the detector is unstable, not because the rewrite was strong.

2. The original text was only lightly AI-like

If the draft already had some human revision, even small paraphrases might push it below a low-confidence threshold.

3. The user rewrote more than they realize

Sometimes people say "QuillBot fixed it," when what really happened is:

- they manually edited the draft first

- QuillBot handled only part of the passage

- the final result included meaningful human changes outside the tool

In those cases, QuillBot was part of the workflow, but not the full reason the score dropped.

When QuillBot usually fails

QuillBot tends to struggle when:

- the draft is raw ChatGPT or Claude output

- the introduction and conclusion are untouched

- the whole essay has the same clean academic rhythm

- the detector is Turnitin, Originality.ai, or a strong GPTZero configuration

- citations or claims need to remain exact

That last point matters. Aggressive paraphrasing can also create a separate problem: it may distort dates, soften claims, or awkwardly rewrite technical wording you actually wanted to preserve.
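
A quick way to catch this kind of drift is to compare the numbers and citations in the draft before and after paraphrasing. The Python sketch below is a rough check under stated assumptions: the `missing_facts` helper is hypothetical, the citation pattern only covers simple parenthetical author-year references such as "(Smith, 2019)", and the sample sentences are invented. It cannot tell whether a claim was softened, only whether a literal figure or citation disappeared.

```python
import re

# Integers, decimals, and percentages, e.g. "2019", "12.5", "40%".
NUMBER_PATTERN = re.compile(r"\d+(?:[.,]\d+)*%?")
# Assumed citation format: "(Smith, 2019)" or "(Smith et al., 2019)".
CITATION_PATTERN = re.compile(r"\([A-Z][A-Za-z\-]+(?: et al\.)?,? \d{4}\)")


def missing_facts(original: str, rewritten: str) -> dict[str, list[str]]:
    """Return numbers and citations found in `original` but not in `rewritten`."""
    report = {}
    for label, pattern in (("numbers", NUMBER_PATTERN), ("citations", CITATION_PATTERN)):
        before = pattern.findall(original)
        after = set(pattern.findall(rewritten))
        # dict.fromkeys de-duplicates while keeping first-seen order.
        report[label] = [tok for tok in dict.fromkeys(before) if tok not in after]
    return report


# Hypothetical before/after pair: the rewrite changed a year and dropped the citation.
original = "Adoption rose 40% between 2019 and 2021 (Smith, 2019)."
rewritten = "Adoption grew by roughly 40% from 2019 to 2022, as Smith reported."

print(missing_facts(original, rewritten))
# {'numbers': ['2021'], 'citations': ['(Smith, 2019)']}
```

Anything the check reports still needs a human look, since a paraphraser may legitimately reformat a figure (for example "40%" to "forty percent") without changing its meaning.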

What students need to watch out for

Students are especially vulnerable to the "QuillBot will fix it" myth because it feels low-risk and familiar.

But if a paper began as AI output, QuillBot can create a dangerous middle ground:

- the writing still carries machine-like structure

- the phrasing becomes slightly stranger or less precise

- the student feels falsely reassured because the words changed

That combination is worse than either a fully manual rewrite or a detector-aware structural revision workflow.

What content teams should watch out for

For marketers and publishers, QuillBot has a different limitation. It may produce readable copy, but readability alone does not solve editorial AI policies.

Content teams reviewing AI-assisted drafts need confidence around:

- detector scores

- meaning preservation

- consistency with brand voice

- citation and fact stability

QuillBot is useful for phrase-level cleanup. It is not a full QA layer for high-stakes AI content workflows.

A better workflow than "paste into QuillBot and hope"

If the goal is to reduce AI detection risk, a stronger workflow looks like this:

- Identify the paragraphs that carry the strongest AI signal

- Preserve the claims, citations, facts, and intended meaning

- Rebuild sentence rhythm and paragraph pacing

- Rewrite the introduction and conclusion manually or structurally

- Recheck the result against the relevant detector

This is slower than one-click paraphrasing, but it is much closer to what detectors actually reward.
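
The first step in that workflow can be roughed out in code. The Python sketch below ranks paragraphs by how uniform their sentence lengths are, so the most evenly paced ones surface first for manual attention. Treat it as a crude triage heuristic rather than a detector: the `rank_paragraphs` helper, the `min_sentences` cutoff, and the sample document are assumptions for illustration, and a low score here does not mean a real detector would flag the paragraph.

```python
import re
import statistics


def rhythm_variation(lengths: list[int]) -> float:
    """Coefficient of variation (stdev / mean) of a list of sentence lengths."""
    return statistics.pstdev(lengths) / statistics.mean(lengths)


def rank_paragraphs(document: str, min_sentences: int = 3) -> list[tuple[int, float]]:
    """Return (paragraph_index, variation) pairs, most uniform paragraphs first.

    Paragraphs are separated by blank lines; those with fewer than
    `min_sentences` sentences are skipped because the statistic is unreliable.
    """
    paragraphs = [p for p in re.split(r"\n\s*\n", document) if p.strip()]
    scored = []
    for index, paragraph in enumerate(paragraphs):
        sentences = [s for s in re.split(r"(?<=[.!?])\s+", paragraph.strip()) if s]
        lengths = [len(s.split()) for s in sentences]
        if len(lengths) >= min_sentences:
            scored.append((index, rhythm_variation(lengths)))
    return sorted(scored, key=lambda pair: pair[1])


# Hypothetical two-paragraph document, invented for this example.
document = (
    "I tried it. Honestly, the first draft surprised me because it read "
    "far better than anything I expected from a tool. Not perfect.\n\n"
    "The tool improves clarity for most users. The tool reduces effort "
    "for most teams. The tool supports editing for most drafts."
)

for index, variation in rank_paragraphs(document):
    print(f"paragraph {index}: variation {variation:.2f}")
```

Here the second paragraph (index 1) ranks first because all three of its sentences have the same length, which makes it the natural place to start rebuilding rhythm and pacing.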

So should you use QuillBot at all?

Yes, but for the right job.

Use QuillBot when you need:

- cleaner phrasing

- alternative wording

- stylistic variation in a human-written draft

Do not rely on QuillBot alone when you need:

- lower Turnitin AI scores

- stronger GPTZero performance

- safe citation preservation in academic work

- reduced structural AI signal across a full document

It is a useful writing tool. It is just not a complete detector-bypass solution.

Final verdict

QuillBot can help text sound different. That is not the same as making it sound deeply human in the ways detectors measure.

If your main goal is bypassing AI detection, the key problem is not wording. It is structure. That is where paraphrasers usually run out of depth.

If you need a workflow designed around detector-facing signals instead of simple paraphrasing, LegitWrite's free AI humanizer is a better fit because it targets sentence rhythm, burstiness, and paragraph structure rather than just synonyms.

The simplest way to say it is this: QuillBot is an editor. AI detection is a pattern problem. Those are related, but they are not the same problem.