GPTZero vs Turnitin vs Originality.ai: Which Detector Is Most Accurate?

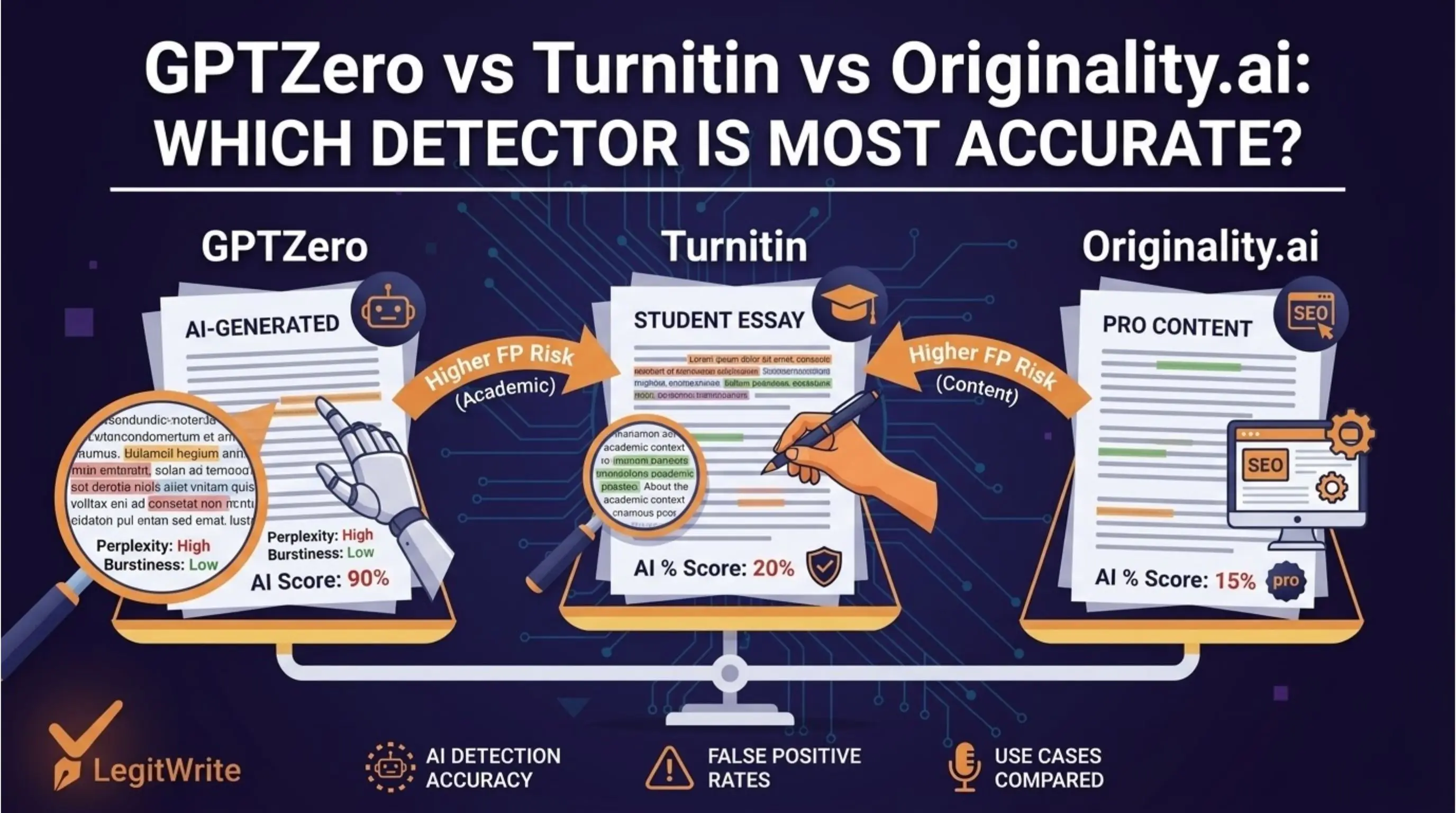

Three tools dominate the AI detection conversation in 2026: GPTZero, Turnitin, and Originality.ai. Each was built for a different context, is calibrated differently, and makes different tradeoffs between catching AI content and avoiding false positives.

If you're a student, educator, or content professional trying to understand which detector to trust — or which one you're likely to be evaluated against — this comparison gives you the straight answer.

The Three Detectors at a Glance

GPTZero was built by Edward Tian, then a Princeton student, and launched in January 2023. It became one of the first widely adopted AI detectors. It's used primarily in educational settings and is available directly to students and educators. It uses perplexity and burstiness as its primary signals and produces both document-level and sentence-level scores.

Turnitin is the established academic integrity platform that added AI detection to its existing plagiarism detection product in April 2023. It's used by thousands of universities and schools worldwide and is the detector most students are actually evaluated against in formal academic contexts. Its AI detection model was trained specifically on academic writing.

Originality.ai was built specifically for professional content marketing contexts — publishers, content agencies, and SEO teams who need to screen content before publication. It launched in late 2022 and has been continuously updated. It's the most aggressive of the three in terms of detection threshold.

How Each Tool Works

All three tools measure statistical properties of text, but their approaches differ in important ways.

GPTZero focuses on perplexity (how predictable word choices are) and burstiness (how much sentence complexity varies). It runs text through a language model and measures how surprised the model is by each word choice. Text that is both highly predictable (low perplexity) and uniform from sentence to sentence (low burstiness) receives a high AI probability score. GPTZero produces a percentage score at both the document level and the sentence level, with colour-coded highlighting showing which sentences are driving the score.
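To make the two signals concrete, here is a minimal sketch of how perplexity and burstiness can be computed from per-token probabilities. It is an illustration only, not GPTZero's actual implementation: the function names, the use of a population standard deviation for burstiness, and the probability values are all assumptions chosen for the example.

```python
import math
from statistics import pstdev


def perplexity(token_probs):
    """Perplexity of one sentence, given the probability a language
    model assigned to each token: exp of the mean negative log-probability."""
    if not token_probs:
        raise ValueError("need at least one token probability")
    avg_neg_log = -sum(math.log(p) for p in token_probs) / len(token_probs)
    return math.exp(avg_neg_log)


def burstiness(sentence_perplexities):
    """Spread of perplexity across sentences (assumed here to be the
    population standard deviation; real tools may define it differently)."""
    return pstdev(sentence_perplexities)


# Hypothetical per-token probabilities for two three-sentence texts.
uniform_text = [[0.5, 0.5, 0.5], [0.5, 0.5], [0.5, 0.5, 0.5, 0.5]]
varied_text = [[0.5, 0.5, 0.5], [0.1, 0.05], [0.9, 0.8, 0.9, 0.7]]

uniform_ppl = [perplexity(s) for s in uniform_text]
varied_ppl = [perplexity(s) for s in varied_text]

print("uniform:", uniform_ppl, "burstiness:", burstiness(uniform_ppl))
print("varied: ", varied_ppl, "burstiness:", burstiness(varied_ppl))
```

In this toy data the first text is equally predictable in every sentence, so its burstiness is essentially zero, while the second swings between very predictable and very surprising sentences. Under the logic described above, the first profile is the one that would look more machine-like.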

Turnitin uses a proprietary model trained on academic writing: both human-written student essays and AI-generated academic content. This specialisation matters because the model is calibrated to the conventions of academic writing and to telling AI-generated essays from human-written ones in that context. It reports the percentage of text estimated to be AI-generated, with sentence-level highlighting for instructors.

Originality.ai combines perplexity measurement, pattern matching against known AI output distributions, and a proprietary neural network trained on professional content. It bundles plagiarism detection with AI detection. It is calibrated more aggressively than the other two: it sets a lower threshold for flagging content, accepting a higher false positive rate in exchange for catching more AI content.

Accuracy: What the Research Shows

Direct head-to-head accuracy comparisons are complicated by the fact that each tool performs differently depending on the type of content and the degree of AI modification. Here's what independent testing consistently finds:

On raw, unmodified AI output, all three tools perform well. Detection rates above 90% are common for clean ChatGPT or GPT-4 output across all three platforms. This is the easy case for all detectors.

On lightly paraphrased AI output (synonym replacement, minor reordering), Originality.ai maintains the highest detection rate — its aggressive calibration catches content that GPTZero and Turnitin begin to miss. Detection rates typically fall to 75-85% across all three, with Originality.ai at the higher end.

On structurally rewritten AI output (genuine sentence-level rewriting with varied structure), all three tools show significant accuracy drops. Detection rates fall to 50-65%, with considerable variation between tools and content types. At this level of modification, no tool maintains reliable accuracy.

On genuinely human writing, the false positive rates differ significantly:

- GPTZero: approximately 2-4% on native English academic writing
- Turnitin: approximately 1-3% on native English academic writing (deliberately calibrated low)
- Originality.ai: approximately 5-9% on professional content (higher false positive rate accepted by design)

For non-native English writers, all three tools show significantly elevated false positive rates — ranging from 8% to 15% depending on the writer's proficiency level and writing style.
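A quick way to see what these percentages mean in practice is to turn them into counts. The sketch below is plain arithmetic under stated assumptions: the batch size of 500 essays and the 10% share of AI-written submissions are hypothetical, while the 90% detection rate and the 2% and 9% false positive rates are taken from the ranges quoted above.

```python
def expected_false_flags(n_human_docs, false_positive_rate):
    """Expected number of genuinely human documents that get flagged."""
    return n_human_docs * false_positive_rate


def flag_precision(prevalence, detection_rate, false_positive_rate):
    """Share of flagged documents that really are AI-written (Bayes' rule).

    prevalence: assumed fraction of all submissions that are AI-written.
    """
    true_flags = prevalence * detection_rate
    false_flags = (1 - prevalence) * false_positive_rate
    return true_flags / (true_flags + false_flags)


# Hypothetical batch: 500 human-written essays, 2% false positive rate.
print(expected_false_flags(500, 0.02))

# Hypothetical prevalence of 10% AI-written submissions, 90% detection rate.
print(round(flag_precision(0.10, 0.90, 0.02), 3))  # low false positive rate
print(round(flag_precision(0.10, 0.90, 0.09), 3))  # high false positive rate
```

Under these assumptions, a 2% false positive rate still means about 10 wrongly flagged essays in a batch of 500, and moving from a 2% to a 9% false positive rate drops the share of flags that are correct from roughly five in six to roughly one in two. The exact figures shift with the assumed prevalence, which is why a flag on its own says little.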

Calibration Philosophy: The Key Difference

The most important difference between these three tools is not their underlying technology — it's their calibration philosophy.

Turnitin is calibrated conservatively. It is designed to minimise false positives, even at the cost of missing some AI content. The reasoning is that a false accusation of academic misconduct has severe consequences for a student, so the threshold for flagging should be high. Turnitin explicitly states that its scores should be treated as one input into instructor judgement, not as standalone evidence.

GPTZero sits in the middle. It is calibrated for educational use with a reasonable balance between detection sensitivity and false positive avoidance. It is more transparent about its methodology than Turnitin and provides more granular sentence-level data.

Originality.ai is calibrated aggressively. It is designed for publishers and content agencies where the cost of publishing AI content without disclosure is considered higher than the cost of occasionally flagging genuine human writing. It accepts a higher false positive rate as the price of higher detection sensitivity.

This means the same piece of text can receive very different scores from the three tools — not because one is more accurate in an absolute sense, but because they are making different tradeoffs based on their intended use cases.
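The tradeoff can be sketched in a few lines. The thresholds and scores below are invented for illustration and are not the real settings of any of the three tools; the point is only that one underlying score distribution yields different detection and false positive rates depending on where the flagging threshold sits.

```python
# Hypothetical flagging thresholds for three calibration styles.
THRESHOLDS = {"conservative": 0.80, "balanced": 0.50, "aggressive": 0.30}

# Hypothetical AI-probability scores for known human and known AI texts.
HUMAN_SCORES = [0.05, 0.10, 0.20, 0.35, 0.55]
AI_SCORES = [0.40, 0.60, 0.75, 0.85, 0.95]


def verdicts(ai_score):
    """Map each calibration style to whether it would flag this score."""
    return {name: ai_score >= t for name, t in THRESHOLDS.items()}


def rates(human_scores, ai_scores, threshold):
    """Return (detection_rate, false_positive_rate) at a given threshold."""
    detected = sum(s >= threshold for s in ai_scores) / len(ai_scores)
    false_pos = sum(s >= threshold for s in human_scores) / len(human_scores)
    return detected, false_pos


# The same score of 0.45 is flagged only by the aggressive calibration.
print(verdicts(0.45))

for name, threshold in THRESHOLDS.items():
    detection, false_positive = rates(HUMAN_SCORES, AI_SCORES, threshold)
    print(f"{name}: detection={detection:.0%}, false positives={false_positive:.0%}")
```

On this toy data, lowering the threshold raises detection and false positives together, which is the pattern the three calibration philosophies trade along.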

Which Tool Should You Trust?

The answer depends entirely on your context.

If you're a student being evaluated by your institution, Turnitin is almost certainly the tool you need to understand. It's the most widely deployed in formal academic settings and it's calibrated specifically for academic writing. A score that passes Turnitin's threshold matters more than a score on GPTZero or Originality.ai if Turnitin is what your institution uses.

If you're a content publisher screening submissions, Originality.ai's aggressive calibration makes it the right tool for catching AI content before it reaches your audience. Accept that it will occasionally flag genuine human writing and build a human review step into your workflow.

If you're a writer checking your own work before submission, GPTZero is the most accessible and transparent option — it's free for basic use, shows sentence-level detail, and explains its methodology more openly than the others. Running your work through GPTZero gives you a useful signal even if the final submission will be evaluated by Turnitin.

If you want the most complete picture, run your text through multiple detectors. The same content scoring low on all three gives you considerably more confidence than a low score on just one. LegitWrite's AI Detector runs multiple detection modes — from Fast to Forensic — giving you a layered view of your content's detection risk in a single scan.
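If you collect scores from several detectors by hand, a small helper can summarise how much they agree. This is a sketch under assumptions: the detector names, the scores, and the 0.5 flagging threshold are placeholders, and it does not call any real detector's API.

```python
def summarise(scores, threshold=0.5):
    """Summarise agreement across detectors.

    scores: mapping of detector name to an AI-probability score in [0, 1].
    threshold: assumed flagging cut-off, applied equally to every detector.
    """
    flagged = sorted(name for name, s in scores.items() if s >= threshold)
    spread = max(scores.values()) - min(scores.values())
    return {
        "flagged_by": flagged,
        "all_clear": not flagged,
        "spread": round(spread, 2),
    }


# Hypothetical scores entered manually after running three detectors.
scores = {"detector_a": 0.12, "detector_b": 0.08, "detector_c": 0.41}

print(summarise(scores))
print(summarise(scores, threshold=0.3))
```

A large spread between detectors is itself informative: it suggests the text sits in the zone where calibration differences, rather than the writing, decide the verdict.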

Head-to-Head Comparison Table

| Feature | GPTZero | Turnitin | Originality.ai |
|---|---|---|---|
| Primary use case | Education | Academic institutions | Content publishing |
| Detection method | Perplexity + burstiness | Proprietary academic model | Neural network + pattern matching |
| False positive rate (native English) | ~2-4% | ~1-3% | ~5-9% |
| Aggressiveness | Medium | Conservative | High |
| Student access | Yes (free tier) | Via institution only | Paid subscription |
| Sentence-level detail | Yes | Yes (instructors only) | Yes |
| Includes plagiarism detection | No | Yes | Yes |
| Transparent methodology | Yes | Partial | Partial |
| Best for | Self-checking, educational use | Formal academic evaluation | Professional content screening |

The Honest Bottom Line

No single detector is definitively "most accurate" — because accuracy depends on what you're measuring, against what type of content, and what tradeoffs you're willing to accept.

What is clear from the research and from the tools' own documentation:

All three tools reliably catch raw AI output. None of them reliably catches heavily revised AI content. All of them produce false positives at meaningful rates for certain writing profiles. None of them should be used as standalone evidence of AI use in any high-stakes context.

The most useful thing you can do with this information is understand which tool applies to your situation, check your own work against it before it reaches an evaluator, and build a writing process that produces genuinely human output — not because you're trying to game a detector, but because that's what produces writing worth reading.

Muhammad Awais is a writer and blogger covering AI tools, academic integrity, and content authenticity.

Want to check your content against multiple detection modes before it reaches an evaluator? Try LegitWrite's free AI Detector — Fast through Forensic modes, no signup required for your first scan.