How to Make AI Text Undetectable in 2026 (Step-by-Step, Tested)

Raw AI output from ChatGPT, Claude, Gemini, or any other model is routinely flagged by the major AI detectors in 2026. The question is not whether it gets flagged; it usually does. The question is what you actually need to change to lower the score reliably.

Not every approach works. Most popular suggestions — synonym replacement, paraphrasing tools, running text through a second AI — leave the underlying fingerprint intact. This guide covers what actually moves the needle, in order of effectiveness.

Why AI Detectors Flag AI Text

AI detectors do not work from a simple list of banned words. They measure statistical patterns in the text as a whole:

Perplexity: how predictable each word choice is to a language model. Models favor high-probability next tokens at each step, which makes AI text highly predictable (low perplexity). Human writers make unexpected, idiosyncratic choices that raise perplexity.

Burstiness: how much sentence length varies within a paragraph. Human writing is bursty: short punchy sentences followed by longer explanatory ones, then another short one. AI writing tends to produce sentences of uniform medium length, paragraph after paragraph.

Structural predictability: how consistently paragraphs follow the same internal pattern of topic sentence, 2–3 supporting points, and closing summary. AI almost always does this. Humans often don't.

Transition density: how often predictable transition phrases appear ("Furthermore," "It is important to note," "In conclusion"). These are extremely high-signal for detectors.

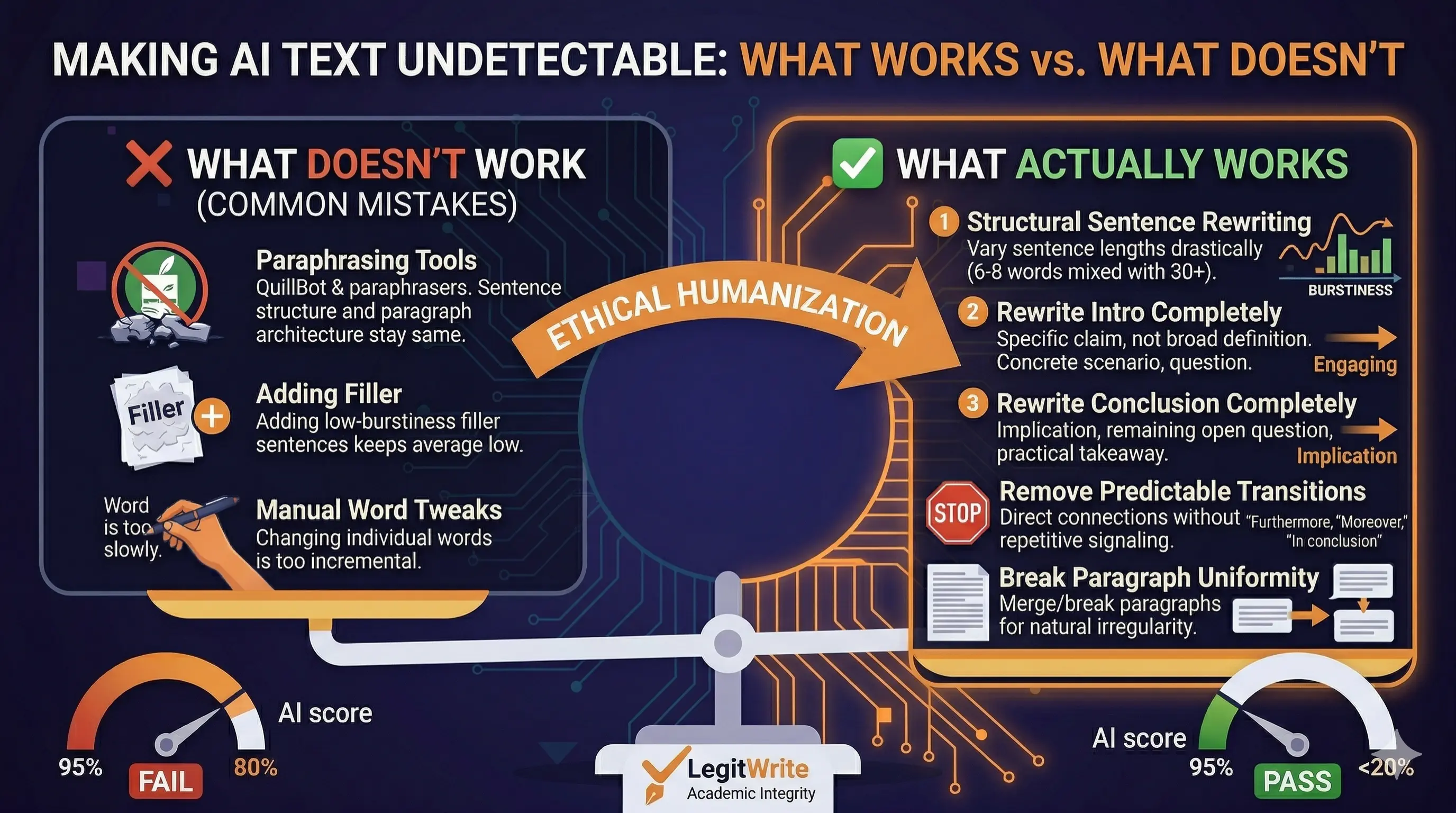

These four patterns are what you need to change. Changes that don't address them — word swaps, light paraphrasing, adding a few sentences — leave the fingerprint largely intact and move the score only slightly.
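To make the first two measures concrete, here is a minimal sketch. It assumes perplexity is computed as the exponential of the average negative log-probability per token, and it approximates burstiness as the coefficient of variation of sentence length. Both formulas and all function names are illustrative assumptions; commercial detectors do not publish their exact scoring.

```python
import math
import re
import statistics


def perplexity(token_probs):
    """Perplexity from the probability a model assigned to each token.

    Assumed formula: exp of the mean negative log-probability.
    """
    avg_neg_log = -sum(math.log(p) for p in token_probs) / len(token_probs)
    return math.exp(avg_neg_log)


def sentence_lengths(text):
    """Word count per sentence, splitting naively on '.', '!' and '?'."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    return [len(s.split()) for s in sentences]


def burstiness(text):
    """Hypothetical burstiness proxy: std dev of sentence length / mean."""
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        return 0.0
    return statistics.pstdev(lengths) / statistics.mean(lengths)


# Confident token choices give low perplexity; surprising ones give high.
predictable = perplexity([0.9, 0.9, 0.9, 0.9])
surprising = perplexity([0.1, 0.1, 0.1, 0.1])
print(predictable < surprising)  # True

# Three sentences of identical length have zero variation.
print(burstiness("One two three. Four five six. Seven eight nine."))  # 0.0
```

The token probabilities here are made-up inputs. In practice they would come from whatever language model the detector uses internally.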

What Doesn't Work

Synonym replacement and paraphrasing tools

Tools like QuillBot in standard mode replace words with synonyms and occasionally reorder clauses. The sentence structure, paragraph architecture, and transition density stay the same. Detectors measure structure, not vocabulary. Score drop from pure synonym replacement: typically 5–15 percentage points — not enough to cross below a detection threshold.

Running the output through another AI

Asking ChatGPT or Claude to "make this sound more human" produces text with the same underlying statistical profile. Different words, same rhythm, same paragraph shape, same transitions. The detector fingerprints the structure, not the specific phrasing.

Manual word-by-word editing

Changing individual words without changing sentence length or paragraph structure moves the score slightly but rarely enough. You are editing the surface while leaving the skeleton intact.

Adding filler sentences

Inserting a few buffer sentences between AI paragraphs does not meaningfully raise burstiness. The detector scores the whole document, and a handful of extra sentences among long runs of uniform-length sentences barely changes the overall variation.

What Actually Works

1. Vary Sentence Length Deliberately (Highest Impact)

This is the single most effective change. Find any three consecutive sentences of similar length and either shorten one drastically — six to eight words — or expand another significantly with a clause or subordinate phrase. Do this across every paragraph, not just one section.

A paragraph that currently reads:

AI detectors look for specific patterns in text. These patterns are statistical in nature. They measure predictability and structure.

Becomes:

AI detectors are not looking for specific words. They measure statistical structure — how predictable your sentence rhythms are, how uniform your paragraph shapes are, how reliably your transitions signal AI origin. That pattern is the problem.

Same content. Different rhythm. The burstiness score rises significantly.
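The "three consecutive sentences of similar length" check can be sketched as a small script. This is an illustration under stated assumptions, not how any detector works: the three-sentence window, the three-word tolerance, and the function names are all hypothetical choices. The second sample is the rewritten paragraph above with its dash replaced by a colon.

```python
import re


def sentence_lengths(text):
    """Word count per sentence, splitting naively on '.', '!' and '?'."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    return [len(re.findall(r"[A-Za-z']+", s)) for s in sentences]


def uniform_runs(text, window=3, tolerance=3):
    """Start indexes of `window` consecutive sentences whose word counts
    differ by at most `tolerance` words (both values are assumptions)."""
    lengths = sentence_lengths(text)
    runs = []
    for i in range(len(lengths) - window + 1):
        chunk = lengths[i:i + window]
        if max(chunk) - min(chunk) <= tolerance:
            runs.append(i)
    return runs


before = (
    "AI detectors look for specific patterns in text. "
    "These patterns are statistical in nature. "
    "They measure predictability and structure."
)
after = (
    "AI detectors are not looking for specific words. "
    "They measure statistical structure: how predictable your sentence "
    "rhythms are, how uniform your paragraph shapes are, how reliably "
    "your transitions signal AI origin. That pattern is the problem."
)

print(sentence_lengths(before), uniform_runs(before))  # [8, 6, 5] [0]
print(sentence_lengths(after), uniform_runs(after))    # [8, 23, 5] []
```

The original paragraph is flagged as one uniform run starting at sentence 0. The rewritten version is not flagged, because its middle sentence is far longer than its neighbors.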

2. Rewrite the Introduction Completely

AI introductions almost always:

- Open with a broad definitional or framing statement

- Define relevant terms

- State what the article will cover

This structure is extremely high-signal. The opening paragraph of most AI-generated documents is where detectors gain the most confidence.

Replace it with:

- A specific claim or tension (not a definition)

- A concrete observation or scenario

- A direct question that frames what follows

This single change often moves the score by 15–25 percentage points on its own.

3. Rewrite the Conclusion Completely

AI conclusions almost always restate the main points that were just made. This is predictable enough that detectors weight it heavily.

Replace a summary conclusion with:

- An implication of what was discussed

- A remaining open question

- A practical takeaway in plain, direct language — no hedges, no "in summary"

4. Remove Predictable Transition Phrases

These are extremely high-signal in AI text. Remove or replace all of them:

- "Furthermore,"

- "It is important to note that"

- "It is worth mentioning"

- "In conclusion,"

- "Additionally,"

- "This highlights the importance of"

- "As mentioned above"

- "On the other hand"

Human writers use these occasionally. AI writers use them constantly. Even removing half of them creates a meaningful shift in the transition density score.
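A quick way to find these phrases in a draft is a simple counter. The sketch below uses only the phrases listed above. The "per 100 words" density figure is an assumed, illustrative metric and not a value any detector reports.

```python
import re

# Phrases taken from the list above, lowercased and without punctuation.
TRANSITIONS = [
    "furthermore",
    "it is important to note that",
    "it is worth mentioning",
    "in conclusion",
    "additionally",
    "this highlights the importance of",
    "as mentioned above",
    "on the other hand",
]


def find_transitions(text):
    """Count case-insensitive occurrences of each listed phrase."""
    lowered = text.lower()
    counts = {}
    for phrase in TRANSITIONS:
        pattern = r"\b" + re.escape(phrase) + r"\b"
        n = len(re.findall(pattern, lowered))
        if n:
            counts[phrase] = n
    return counts


def transitions_per_100_words(text):
    """Hypothetical density metric: listed phrases per 100 words."""
    words = len(text.split())
    if words == 0:
        return 0.0
    return 100 * sum(find_transitions(text).values()) / words


sample = "Furthermore, the data is clear. In conclusion, it works."
print(find_transitions(sample))  # {'furthermore': 1, 'in conclusion': 1}
```

Running this on a draft before and after editing shows whether the count actually fell.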

5. Break Paragraph Uniformity

AI paragraphs are typically 3–5 sentences with predictable internal structure. Human writing varies: some paragraphs are one sentence. Some are eight sentences with a different internal logic. Some open with a question or a data point rather than a topic sentence.

Make deliberate structural variations:

- Break one long AI paragraph into two — one of them a single sentence

- Merge two short AI paragraphs into one longer block

- Start a paragraph with a question instead of a statement

The goal is visible irregularity across the document.
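Paragraph irregularity can be checked the same way. This sketch counts sentences per paragraph and reports the spread. It assumes paragraphs are separated by blank lines and uses standard deviation as a stand-in for "visible irregularity"; both are illustrative choices.

```python
import re
import statistics


def paragraph_shapes(document):
    """Sentence count for each blank-line-separated paragraph."""
    paragraphs = [p for p in re.split(r"\n\s*\n", document) if p.strip()]
    return [
        len([s for s in re.split(r"[.!?]+", p) if s.strip()])
        for p in paragraphs
    ]


def shape_spread(document):
    """Population standard deviation of sentences per paragraph."""
    shapes = paragraph_shapes(document)
    return statistics.pstdev(shapes) if len(shapes) > 1 else 0.0


uniform = "A b. C d. E f.\n\nG h. I j. K l."
varied = "One line.\n\nA b. C d. E f. G h?"

print(paragraph_shapes(uniform), shape_spread(uniform))  # [3, 3] 0.0
print(paragraph_shapes(varied), shape_spread(varied))    # [1, 4] 1.5
```

A spread near zero means every paragraph has the same number of sentences, which is the uniformity this step is meant to break.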

Expected Score Drops

Starting from a typical ChatGPT essay at 90–95% AI probability on GPTZero:

| Change | Typical Score Drop (percentage points) |
|---|---|
| Synonym replacement only | 5–15 |
| Introduction rewrite | 15–25 |
| Conclusion rewrite | 10–20 |
| Sentence length variation | 20–35 |
| Remove transition phrases | 5–10 |
| Break paragraph uniformity | 10–15 |
| All structural changes (one pass) | 50–75 |

Applying all structural changes in one pass typically brings a 90%+ AI score down to 15–40%. A targeted second pass on the remaining high-signal sections can push it below 15%.
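The drops are subtracted from the starting score as percentage points, not applied as a relative percentage. A minimal sketch of that arithmetic, with a hypothetical helper name:

```python
def score_after(start, drop_low, drop_high):
    """Remaining score range after a drop given in percentage points.

    Returns (best_case, worst_case), floored at zero.
    """
    best = max(0, start - drop_high)
    worst = max(0, start - drop_low)
    return best, worst


# A 90% starting score with a 50-75 point drop from one structural pass.
print(score_after(90, 50, 75))  # (15, 40)
```

The same drop applied to a 95% start gives 20 to 45, so where a document begins matters as much as the size of the drop.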

Turnitin is harder to reduce than GPTZero. The same structural changes help, but the scoring model is updated more frequently and is calibrated more conservatively. Getting reliably below Turnitin's threshold usually requires a second pass and some manual editing of the introduction, conclusion, and any remaining high-signal paragraphs.

Using LegitWrite to Automate the Structural Pass

The manual workflow above works but takes 30–60 minutes on a 1,000-word document. LegitWrite automates the structural rewriting pass — sentence length variation, paragraph restructuring, introduction and conclusion rewrites — in seconds.

How to use it:

- Paste your AI-generated text into LegitWrite

- Choose a mode: Fast (quick pass), Moderate (balanced), Strong (deep structural rewrite), or Forensic (maximum depth — paid plans only)

- Select a tone: Formal for academic work, Casual for general content, Auto to let the system match your original

- Run the humanization pass

- Paste the output into GPTZero or Originality.ai to check the score

- Manually edit any sections that still score high, then re-run if needed

What each mode does differently:

- Fast — quick burstiness and transition adjustments; good for content that's already close

- Moderate — sentence rhythm variation and paragraph restructuring; handles most standard AI essays

- Strong — full structural rewrite including introduction/conclusion zones; recommended for academic essays

- Forensic — deepest pass, targets patterns the other modes leave behind; available on Basic, Pro, and Plus plans

Plan options:

| Plan | Price | Daily Requests | Humanizer Input | Modes |
|---|---|---|---|---|
| Free | $0 | 5/day | 6,000 chars | Fast / Moderate / Strong |
| Basic | $4.99/mo | 20/day | 10,000 chars | + Forensic |
| Pro | $8.99/mo | 60/day | 15,000 chars | + Forensic |
| Plus | $13.99/mo | 120/day | 30,000 chars | + Forensic |

First month on any paid plan is 50% off with code Enjoy50, applied automatically at checkout.

Try LegitWrite free — 5 requests per day, no credit card →

Making AI Text Undetectable on Specific Detectors

GPTZero

GPTZero measures perplexity and burstiness across the full document. It's particularly sensitive to uniform sentence length and predictable paragraph structure. Most documents go from 85–95% AI probability to below 30% after one Strong-mode pass in LegitWrite. Below 15% typically requires a second pass targeting the remaining high-signal sections.

Turnitin

Turnitin's AI detection is more conservative than GPTZero and is updated more frequently. It looks at sentence-level predictability, structural markers, and overall document smoothness. The same structural changes help, but getting reliably below Turnitin's threshold often requires two LegitWrite passes plus manual editing — particularly on the introduction and conclusion, which carry the most signal weight.

Originality.ai

Originality.ai is the most aggressive of the three — it flags more borderline cases and tends to maintain higher AI probability scores even after structural rewriting. After a Strong or Forensic pass in LegitWrite, some documents that pass GPTZero still show 40–60% on Originality.ai. A second pass and targeted manual editing of high-signal paragraphs usually resolves this.

The Realistic Ceiling

No method makes AI text 100% undetectable under all conditions:

- Detector models update continuously. What passes today may be flagged in a future version.

- Very long documents (5,000+ words) accumulate AI signals across more paragraphs, requiring more passes.

- Highly constrained text — technical papers with fixed citations, regulatory language, structured reports — has fewer structural options because the content limits what can change.

The practical goal is not "undetectable"; it is "below the threshold that triggers a flag." For most use cases, that means below 30% AI probability on GPTZero and not triggering Turnitin's AI writing detection. With one structural pass using Strong or Forensic mode, most standard-length AI-generated essays reach the GPTZero target. Turnitin usually takes a second pass plus manual editing.

Step-by-Step Workflow Summary

- Run the original text through LegitWrite's AI Detector — note which sections score highest

- Paste the full text into the Humanizer — choose Strong mode, select your tone

- Run the humanization pass

- Test the output in GPTZero — if below 30%, you're likely in the clear for most use cases

- For Turnitin: manually review the introduction, conclusion, and any transitions that survived the pass; edit them directly

- Re-run the Humanizer on sections that still score high — target one or two high-signal paragraphs rather than the whole document again

- Final check: read the output to confirm meaning, citations, and argument are intact