AI Writing Detection in 2026: How Reliable Are AI Detectors Really?

The question sounds simple. The honest answer is complicated.

Yes, AI writing is detectable — sometimes, in some contexts, with some degree of reliability. No, it is not reliably detectable in all cases, and the gap between "sometimes works" and "always works" is large enough to matter enormously depending on who you are and what's at stake.

Here is a straight answer to each version of this question.

Can AI Detectors Identify AI Writing?

Yes — when the writing is unmodified, directly generated output from a major language model, submitted in a context the detector was trained for.

If someone takes a raw ChatGPT response and submits it to Turnitin, GPTZero, or Originality.ai without any modification, current detectors will flag it with high confidence in most cases. Unmodified AI output has recognisable statistical patterns: low perplexity (each word is highly predictable from the words before it), low burstiness (sentence length and structure vary little), uniform structure, and characteristic phrasing. These are detectable enough that accuracy rates on clean AI text run above 90% for leading tools.
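To make perplexity and burstiness less abstract, here is a minimal sketch of both ideas. It is an illustration under loud assumptions, not how any commercial detector works: the function names are hypothetical, burstiness is approximated as the spread of sentence lengths, and perplexity is computed against a toy unigram word-frequency model rather than the neural language model a real tool would use.

```python
import math
import re
from collections import Counter
from typing import List


def sentence_lengths(text: str) -> List[int]:
    """Split on ., ! or ? and return the word count of each sentence."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    return [len(s.split()) for s in sentences]


def burstiness(text: str) -> float:
    """Coefficient of variation of sentence length (std / mean).

    Higher values mean sentence lengths vary more.
    """
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        return 0.0
    mean = sum(lengths) / len(lengths)
    variance = sum((n - mean) ** 2 for n in lengths) / len(lengths)
    return math.sqrt(variance) / mean


def unigram_perplexity(text: str, reference: str) -> float:
    """Perplexity of `text` under an add-one-smoothed unigram model of `reference`.

    Assumption: the unigram model is a stand-in so the example runs
    without dependencies. Lower perplexity means more predictable text.
    """
    ref_tokens = reference.lower().split()
    tokens = text.lower().split()
    if not tokens:
        raise ValueError("text must contain at least one word")
    counts = Counter(ref_tokens)
    vocab_size = len(set(ref_tokens) | set(tokens))
    total = len(ref_tokens)
    log_prob = sum(
        math.log((counts[t] + 1) / (total + vocab_size)) for t in tokens
    )
    return math.exp(-log_prob / len(tokens))


uniform = "The model writes clear text. The model keeps steady pace. The model avoids odd turns."
varied = "No. The draft wandered for a long while before it found the point it was trying to make. Then it stopped."
print("uniform burstiness:", burstiness(uniform))
print("varied burstiness: ", burstiness(varied))
```

The uniform sample has three sentences of identical length, so its burstiness is zero, while the varied sample scores well above it. Real detectors combine many such signals, which is part of why a single number should not be read as proof.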

This is the easy case. It's also not the case most people are actually asking about.

Can Detectors Identify Modified or Humanised AI Writing?

This is where detection becomes genuinely unreliable.

Once AI-generated text has been structurally rewritten (not just paraphrased at the surface level, but rebuilt with varied sentence rhythm, personal voice, specific detail, and genuine structural irregularity), detection accuracy drops significantly. Studies published in 2024 and 2025 found that detection accuracy against heavily revised AI content fell to between 50% and 65% across leading detectors. For a yes/no call on an evenly split test set, guessing alone scores 50%, so this is only marginally better than random chance.

The implication is significant: a student or writer who uses AI as a drafting tool and then genuinely rewrites the output in their own voice may produce text that is statistically indistinguishable from human writing. Not because they gamed the system, but because the rewriting process itself introduces the human variation that detectors are looking for.

Has Detection Improved in 2026?

Yes, meaningfully — but so has AI writing.

The 2025 and 2026 generations of AI models produce more varied, less formulaic output than GPT-3-era text. Models are increasingly being fine-tuned to produce writing with higher perplexity and more natural burstiness. Some are explicitly trained to reduce their detectability.

Meanwhile, detectors have added more sophisticated signals — semantic coherence analysis, document-level pattern matching, comparison against known AI output distributions — that catch more sophisticated AI use than earlier tools could.

The result is an ongoing arms race where neither side has achieved a decisive advantage. Detection is better than it was in 2023. It is not reliable enough to be treated as definitive evidence of AI use in any serious context.

What Do the Major Detectors Say About Their Own Accuracy?

This is revealing.

Turnitin publicly states that its AI detection should be used as one signal among many and explicitly warns against using a high score as standalone evidence of academic misconduct. Its own documentation recommends instructor judgement alongside automated scoring.

GPTZero reports accuracy rates above 99% on its benchmark tests — but those benchmarks use clean, unmodified AI output. Real-world accuracy against mixed or revised content is significantly lower.

Originality.ai claims industry-leading accuracy for professional content marketing contexts but acknowledges false positive rates that make it unsuitable as a sole arbiter of authorship.

Every major detector includes some version of the same caveat: automated scores are indicators, not verdicts. This isn't false modesty — it's an accurate reflection of what probabilistic detection can and cannot do.
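A short calculation shows why a score is an indicator rather than a verdict. The sketch below applies Bayes' rule to a detector's flag. Every number in it is hypothetical and chosen only to illustrate the arithmetic; none is a published figure for any real tool, and `flag_precision` is an illustrative name.

```python
def flag_precision(
    base_rate: float, true_positive_rate: float, false_positive_rate: float
) -> float:
    """Probability that a flagged document really is AI-written (Bayes' rule).

    base_rate:           share of all submissions that are AI-written
    true_positive_rate:  share of AI-written text the detector flags
    false_positive_rate: share of human-written text the detector flags
    """
    flagged_ai = base_rate * true_positive_rate
    flagged_human = (1 - base_rate) * false_positive_rate
    return flagged_ai / (flagged_ai + flagged_human)


# Hypothetical detector: catches 90% of AI text, wrongly flags 2% of human text.
for base_rate in (0.05, 0.20, 0.50):
    p = flag_precision(base_rate, true_positive_rate=0.90, false_positive_rate=0.02)
    print(f"base rate {base_rate:.0%}: a flagged text is AI with probability {p:.1%}")
```

With these assumed rates, when only 5% of submissions are AI-written, roughly three in ten flagged documents are human-written. The same detector looks far more trustworthy when half of all submissions are AI-written, which is why the context of use matters as much as the headline accuracy.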

Can a Human Tell If Writing Is AI-Generated?

Often, yes — but not reliably, and not always for the right reasons.

Experienced readers — editors, professors, content directors — can often identify AI-generated writing by feel. The giveaways are usually qualitative rather than statistical: a kind of frictionless fluency, arguments that flow perfectly but say nothing surprising, examples that are generic rather than specific, a complete absence of the rough edges that reflect genuine thinking.

But this human detection is also unreliable and subject to bias. Studies have shown that human reviewers flag non-native English writing as AI-generated at higher rates than native writing, regardless of whether AI was used. Highly polished human writing is sometimes perceived as AI-generated. Writing that is deliberately imperfect is sometimes perceived as human even when it isn't.

Human detection, like automated detection, is a probabilistic judgement — not a definitive answer.

What About Watermarking?

Watermarking is the most promising long-term approach to identifying AI-generated text, and it's worth understanding why it hasn't solved the problem yet.

Some AI providers — most notably Google with its SynthID technology — are embedding invisible statistical watermarks into AI-generated text. These watermarks work by subtly biasing word choice patterns in ways that are imperceptible to readers but detectable by a corresponding verification tool.

The problem is that watermarking is opt-in by providers, can be weakened or erased by heavy paraphrasing or rewriting, and only works if the text reaches a verifier that has access to the original watermarking key. When watermarked AI text is submitted to Turnitin, the watermark goes unread, because Turnitin doesn't have the key.
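The key dependence is easier to see in a toy example. The sketch below is not SynthID's actual algorithm. It is a simplified "green list" scheme of the kind described in the research literature, with a random word picker standing in for a language model. All names here (`is_green`, `generate`, `watermark_z_score`) are hypothetical.

```python
import hashlib
import math
import random
from typing import List, Optional


def is_green(prev_token: str, token: str, key: str) -> bool:
    """Keyed pseudo-random split: about half of all tokens are 'green' after any given token."""
    digest = hashlib.sha256(f"{key}|{prev_token}|{token}".encode()).digest()
    return digest[0] % 2 == 0


def generate(vocab: List[str], length: int, key: Optional[str], seed: int = 0) -> List[str]:
    """Stand-in 'model' that picks words uniformly at random.

    With a key, it picks only among green candidates whenever any exist.
    """
    rng = random.Random(seed)
    tokens = ["<s>"]
    for _ in range(length):
        candidates = vocab
        if key is not None:
            green = [w for w in vocab if is_green(tokens[-1], w, key)]
            candidates = green or vocab
        tokens.append(rng.choice(candidates))
    return tokens[1:]


def watermark_z_score(tokens: List[str], key: str) -> float:
    """z-score of the green-token count against the 50% expected with no watermark."""
    pairs = list(zip(["<s>"] + tokens[:-1], tokens))
    green = sum(is_green(prev, tok, key) for prev, tok in pairs)
    n = len(pairs)
    return (green - 0.5 * n) / math.sqrt(0.25 * n)


vocab = [f"w{i}" for i in range(50)]
marked = generate(vocab, 200, key="secret")
plain = generate(vocab, 200, key=None)

print("marked text, right key:", watermark_z_score(marked, "secret"))     # large positive
print("marked text, wrong key:", watermark_z_score(marked, "wrong-key"))  # close to zero
print("plain text, right key: ", watermark_z_score(plain, "secret"))      # close to zero
```

Only the verifier holding the right key sees a strong signal. With the wrong key, the watermarked text looks statistically the same as unwatermarked text, which is the position a third-party detector is in.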

Watermarking may eventually become a standard part of AI content infrastructure — but in 2026, it is not a reliable detection mechanism for most real-world contexts.

The Honest Summary

| Question | Honest answer |
|---|---|
| Can detectors catch raw AI output? | Yes, with high reliability |
| Can detectors catch revised AI output? | Sometimes; accuracy drops significantly with genuine rewriting |
| Is detection reliable enough to prove AI use? | No; every major detector explicitly says so |
| Can humans reliably detect AI writing? | Often but not always, and subject to significant bias |
| Will detection improve? | Yes, but AI writing will improve alongside it |
| Is there a foolproof detection method? | Not in 2026 |

What This Means in Practice

If you're a student worried about false positives — genuine human writing being flagged as AI — the risk is real but manageable. Write with specific detail, varied sentence structure, and genuine personal voice. Check your work with a detector before submitting. Understand that a high score is a flag for review, not a verdict.

If you're a content publisher trying to screen for AI content, the tools available will catch obvious, unmodified AI output reliably. They will not catch sophisticated AI-assisted writing with any consistency. Your editorial process needs human judgement alongside automated scoring.

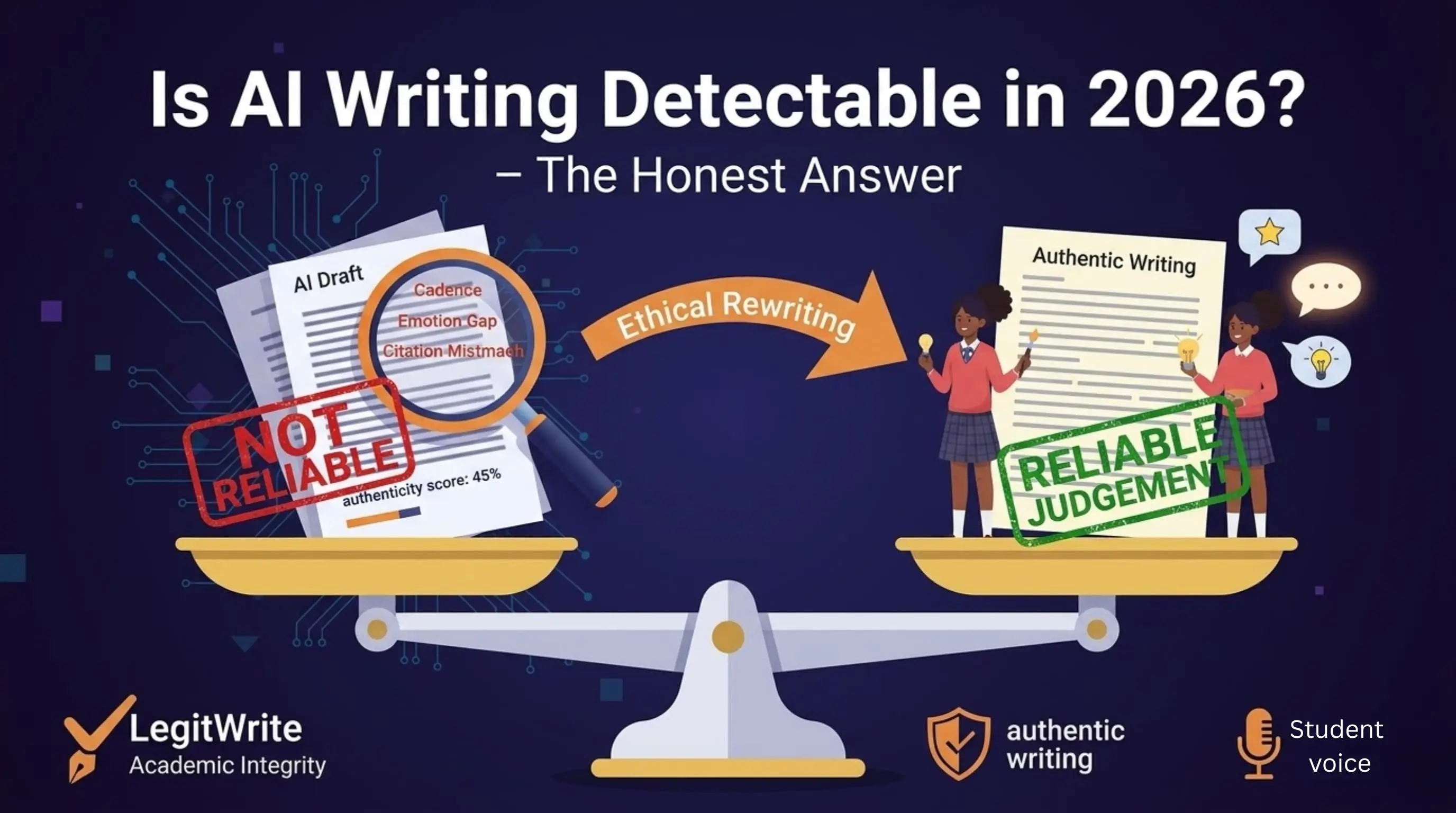

If you're using AI as a writing tool and want to ensure your final output reads as genuinely human, the answer is not to outsmart the detector. It's to actually rewrite the content in your own voice, with your own specific knowledge, until the writing reflects your genuine thinking. At that point, whether a detector flags it becomes almost irrelevant, because the work is authentically yours.

Tools like LegitWrite's AI Humanizer are built for exactly this — not to trick detectors, but to help writers identify where AI patterns persist in their drafts and revise toward genuine human expression.

The goal was never to be undetectable. The goal was always to write something worth reading.

Muhammad Awais is a writer and blogger covering AI tools, detection technology, and content authenticity.
