What Is the 30% Rule for AI? (The Turnitin Threshold Explained)

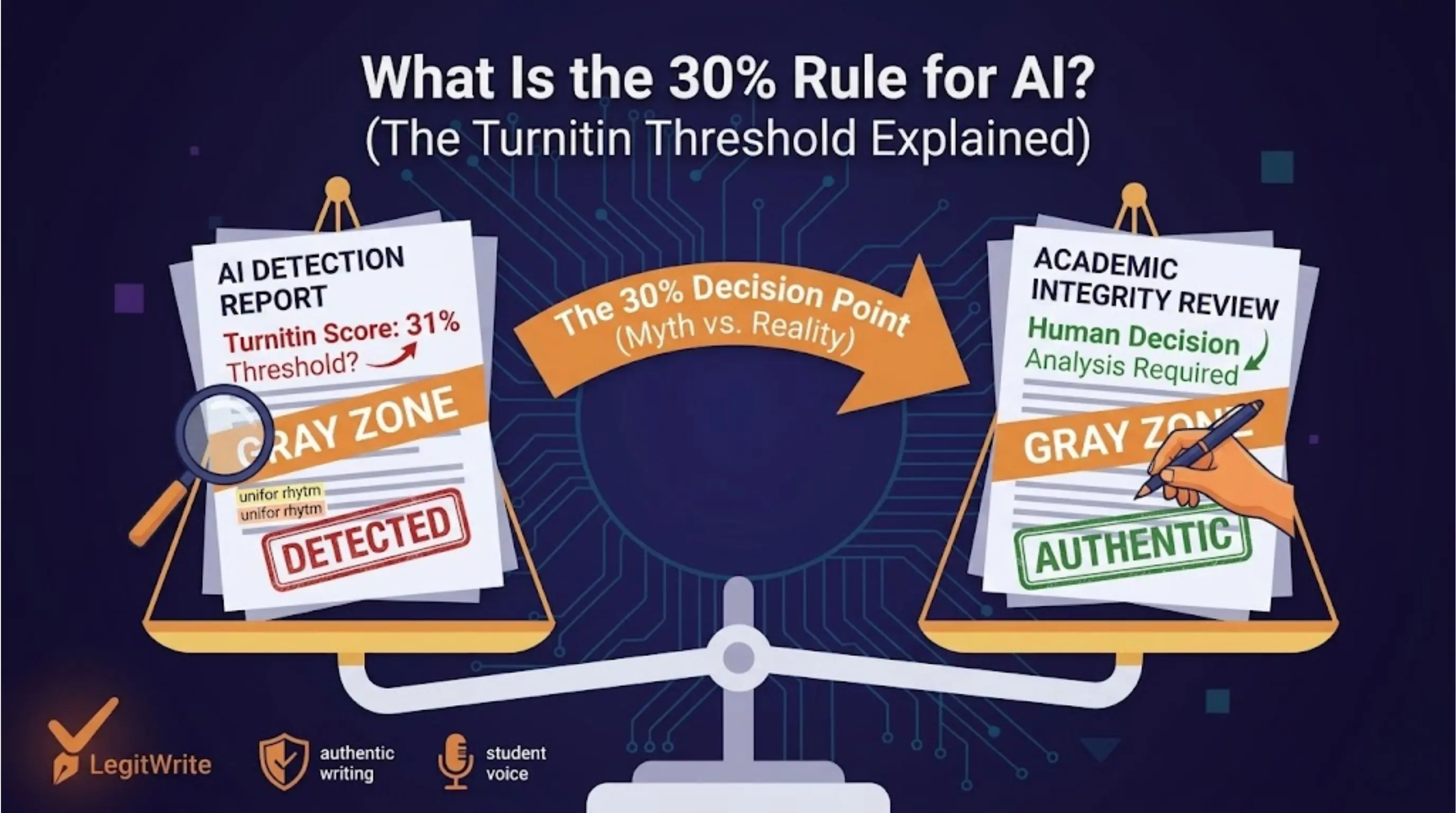

Students keep hearing the same claim: if your Turnitin AI score is under 30%, you are safe. If it is over 30%, you are in trouble. That idea spreads fast because it sounds simple, measurable, and practical.

The problem is that the "30% rule" is not an official Turnitin rule. It is a shorthand some instructors, schools, and student forums use when they are trying to interpret a probabilistic AI score. Sometimes it reflects a local habit. Sometimes it is just a rumor repeated so often that it starts sounding like policy.

If you are trying to understand what 30% actually means, the right question is not "is 30% the real threshold?" The right questions are: what is Turnitin measuring, how do institutions interpret the score, and what should you do if your report lands around that range?

Quick answer: there is no universal 30% Turnitin rule

Here is the short version.

| Claim | Reality |
|---|---|
| Turnitin has an official 30% AI pass/fail threshold | No. Turnitin reports a score. Institutions decide how to interpret it. |
| Under 30% always means safe | No. Some instructors will still review the work manually. |
| Over 30% always proves misconduct | No. The score is not proof. It is a signal that invites review. |
| 30% is meaningless | Also no. It can still matter if a department or instructor uses it informally. |

So the 30% figure is best understood as a decision point some people use, not a rule hard-coded into the software.

Where the 30% rule comes from

The 30% idea usually comes from one of three places.

First, some instructors want a simple internal guideline. They may not want to investigate every paper with a 6% or 12% AI score because they know low detector scores are often ambiguous. In practice, they start paying closer attention once a paper crosses a cutoff such as 20%, 25%, or 30%.

Second, students often confuse institutional practice with platform policy. If one professor says "I review anything over 30%," that quickly becomes "Turnitin flags anything over 30%." Those are not the same statement.

Third, the number persists because it feels intuitive. A score under 10% seems low. A score over 80% seems extreme. A score around 30% feels like the middle zone where suspicion begins. That makes it easy for online communities to turn it into a mythic threshold.

None of that means the number is fake. It means the number is contextual.

What Turnitin's AI score actually represents

Turnitin's AI detector does not read your essay the way a professor does, weighing argument, evidence, and insight. Instead, it analyzes the statistical properties of the writing and estimates how much of the submitted text resembles machine-generated output.

That means the score is not a declaration of fact. It is not saying, "30% of this essay was definitely written by AI." It is saying, "A portion of this writing resembles the kinds of patterns our model associates with AI generation."

Those patterns include:

- predictable word sequencing

- highly regular sentence length

- overly even paragraph rhythm

- generic transition language

- low structural variation across the document

This matters because human writing can show these patterns too, and a score near 30% often sits in the most ambiguous zone: not clearly clean, not clearly overwhelming, and therefore highly dependent on human judgment.
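One way to see why "30% of the text resembles AI patterns" is different from "30% likely to be AI" is a small arithmetic sketch. It assumes, purely for illustration, that a detector labels individual segments of a paper and that the headline number is the share of words falling in flagged segments. That weighting is an assumption, not Turnitin's published formula, and `Segment` and `flagged_share` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass
class Segment:
    """One stretch of a paper with a hypothetical detector verdict."""
    label: str
    word_count: int
    flagged_as_ai: bool  # assumed per-segment prediction, for illustration only


def flagged_share(segments: list[Segment]) -> float:
    """Percentage of words that sit in segments flagged as AI-like.

    This is a share of the text, not a probability that the
    paper as a whole was written by AI.
    """
    total = sum(s.word_count for s in segments)
    if total == 0:
        return 0.0
    flagged = sum(s.word_count for s in segments if s.flagged_as_ai)
    return 100 * flagged / total


# A made-up 1,000-word paper: only the introduction and conclusion are flagged.
paper = [
    Segment("introduction", 150, True),
    Segment("body", 700, False),
    Segment("conclusion", 150, True),
]
print(f"{flagged_share(paper):.0f}%")  # 30%
```

In this invented example, two short flagged sections produce a 30% score while the 700-word body is untouched. The number describes how much text looks AI-like under the model, not how confident anyone should be about who wrote it.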

Why 30% can still trigger review even without an official rule

Suppose an instructor sees 2%, 8%, and 14% on different assignments all week. Then a paper comes in at 31%. Even if there is no formal threshold, that number may feel different enough to justify a closer look.

That review might include:

- reading the highlighted sections carefully

- comparing the style with the student's earlier work

- checking whether the introduction and conclusion sound unusually polished

- asking the student about their drafting process

- reviewing whether citations, claims, and phrasing align with the student's typical voice

So yes, 30% can matter in practice. But it matters because people use it as a review trigger, not because Turnitin itself enforces an automatic penalty there.
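To make that distinction concrete, here is a minimal sketch of how an instructor's informal habit might be written down. The `review_action` function and its `review_band` parameter are hypothetical: the cutoff is chosen by a person, not read from Turnitin, and the strongest outcome any branch can return is a human review, never a finding of misconduct.

```python
def review_action(ai_score: float, review_band: float = 30.0) -> str:
    """Return the next step under a hypothetical local review habit.

    review_band is a cutoff picked by an instructor or department,
    not a Turnitin setting.
    """
    if not 0 <= ai_score <= 100:
        raise ValueError("ai_score must be between 0 and 100")
    if ai_score >= review_band:
        # Crossing the band only triggers a closer human read.
        return "manual review"
    # Below the band the paper is graded normally, which can still
    # include a manual check if something else looks off.
    return "standard grading"


for score in (2, 8, 14, 31):
    print(score, review_action(score))
```

Changing `review_band` to 20 or 25 changes which papers get a closer look, but it does not change what the detector measured. That is why the cutoff is local practice rather than platform policy.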

What kinds of papers often land around 20% to 40%

This is the range where many borderline cases live. A paper can land there for very different reasons:

1. Lightly edited AI output

This is the classic case. A student generates a draft in ChatGPT, changes a few sentences, swaps a few words, and submits it. The vocabulary changes, but the underlying structure still looks machine-made.

2. Formulaic academic prose

Some genuinely human writing is very predictable. This is especially common in:

- five-paragraph essays

- ESL writing with simple but correct structure

- highly templated reflection papers

- introductory discussion posts

The more standardized the prose, the easier it is for statistical models to confuse it with AI-like writing.

3. Mixed-origin documents

Sometimes a student writes most of the paper themselves but uses AI for:

- the introduction

- topic sentences

- a summary paragraph

- a conclusion rewrite

Those sections can disproportionately influence the score because they are often the most detector-sensitive parts of the paper.

What students should do if their score is around 30%

Panic is usually the worst move. A score in the 30% range is exactly where you need a calm, evidence-based response.

Review the high-risk sections first

Do not treat the paper as one big problem. Review:

- the introduction

- the conclusion

- any unusually smooth, transition-heavy paragraphs

- any paragraph you know began as AI output

These are often the sections carrying the strongest signal.

Look for structural uniformity

Ask practical questions:

- are all my sentences roughly the same length?

- do all paragraphs follow the same shape?

- do transitions sound too polished and generic?

- does the paper sound more fluent than the way I naturally write?

If the answer to most of these is yes, the issue is usually structure rather than vocabulary.
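The first question in that list can be checked roughly with a few lines of code. This sketch splits text into sentences with a naive regular expression (it will mishandle abbreviations such as "e.g.") and reports the coefficient of variation of sentence length: the standard deviation divided by the mean. It is a generic readability statistic chosen here for illustration, not how Turnitin scores text, and no particular value predicts a detector result. The function names are hypothetical.

```python
import re
import statistics


def sentence_lengths(text: str) -> list[int]:
    """Split on ., ! or ? followed by whitespace and count words per sentence.

    Naive splitter: abbreviations like "e.g." will be treated as sentence ends.
    """
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    return [len(s.split()) for s in sentences if s]


def length_variation(text: str) -> float:
    """Coefficient of variation of sentence length (std dev / mean).

    Lower values mean the sentences are closer to the same length.
    """
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        return 0.0
    return statistics.pstdev(lengths) / statistics.mean(lengths)


uniform = "The study has clear aims. The method is very sound. The results are quite strong."
varied = ("The study asks one question. Does sleep loss change how students "
          "revise essays under deadline pressure? It does.")
print(f"{length_variation(uniform):.2f}")  # 0.00
print(f"{length_variation(varied):.2f}")   # 0.62
```

A value near zero only tells you that your sentences are similar in length. It is a prompt to reread the passage with the other questions in mind, not a score to optimize.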

Preserve the argument, rewrite the rhythm

Students often make the mistake of chasing the score with random synonym swapping. That rarely solves the real problem. A better approach is to keep the same thesis, citations, and evidence while changing:

- sentence variety

- paragraph rhythm

- transition patterns

- emphasis and pacing

That is why structural rewriting usually does more than a paraphrasing tool.

What instructors should understand about the 30% myth

The 30% rule is also risky on the faculty side. If an instructor treats 30% as if it were a formal proof standard, they may overstate what the detector is capable of.

The healthier interpretation is:

- low scores are not always clean

- medium scores do not always mean misconduct

- high scores are still signals, not verdicts

The score should be one input into a broader review process. That process should include writing quality, student history, assignment type, and the possibility of false positives.

This is especially important because a rigid threshold can create two bad outcomes at once:

- students who used AI heavily but edited enough to drop below the threshold feel falsely safe

- students who wrote honestly but in a predictable style get pulled into unnecessary suspicion

So what is the best way to think about 30%?

Think of it as a gray-zone threshold, not a legal threshold.

At around 30%, many reviewers start asking more questions. That does not mean the detector has reached certainty. It means the document now sits in a zone where interpretation matters more than raw numbers.

If you are a student, your goal should not be "stay under 30% at all costs." Your goal should be to submit writing that is genuinely yours: your own structure, your own logic, and your own control over the argument.

If you are an educator, your goal should not be to replace judgment with a percentage. It should be to use the percentage as one clue among several.

What to do next

If you are revising a paper that feels risky under Turnitin, focus on the parts the detector is most likely to score aggressively: introductions, conclusions, topic sentences, and overly uniform paragraphs.

For a practical workflow, start with a detector-aware rewrite rather than a synonym tool. LegitWrite's Bypass Turnitin AI Detection page explains how structural rewriting reduces the AI fingerprint while keeping your citations, claims, and argument intact.

The useful takeaway is simple: there is no magical 30% safety line. There is only writing that still carries a strong machine signature, and writing that has been revised enough to read like a real human draft.