Originality.ai vs GPTZero vs Turnitin: Which Should You Use?

If you search for the "best" AI detector, you usually see the same three names repeated: Originality.ai, GPTZero, and Turnitin. But the comparison is often framed too loosely, as if they all serve the same user and solve the same problem in the same way.

They do not.

Each platform was built for a different environment, with a different operational goal, and that changes how you should evaluate it. A publisher auditing freelance content does not have the same requirements as a university handling student submissions. A teacher reviewing one essay does not need the same workflow as a content agency scanning hundreds of articles every week.

The useful question is not "Which detector is strongest in the abstract?" It is "Which detector fits the decision you actually need to make?" Once you look at the tools through that lens, the differences become much clearer.

The short answer

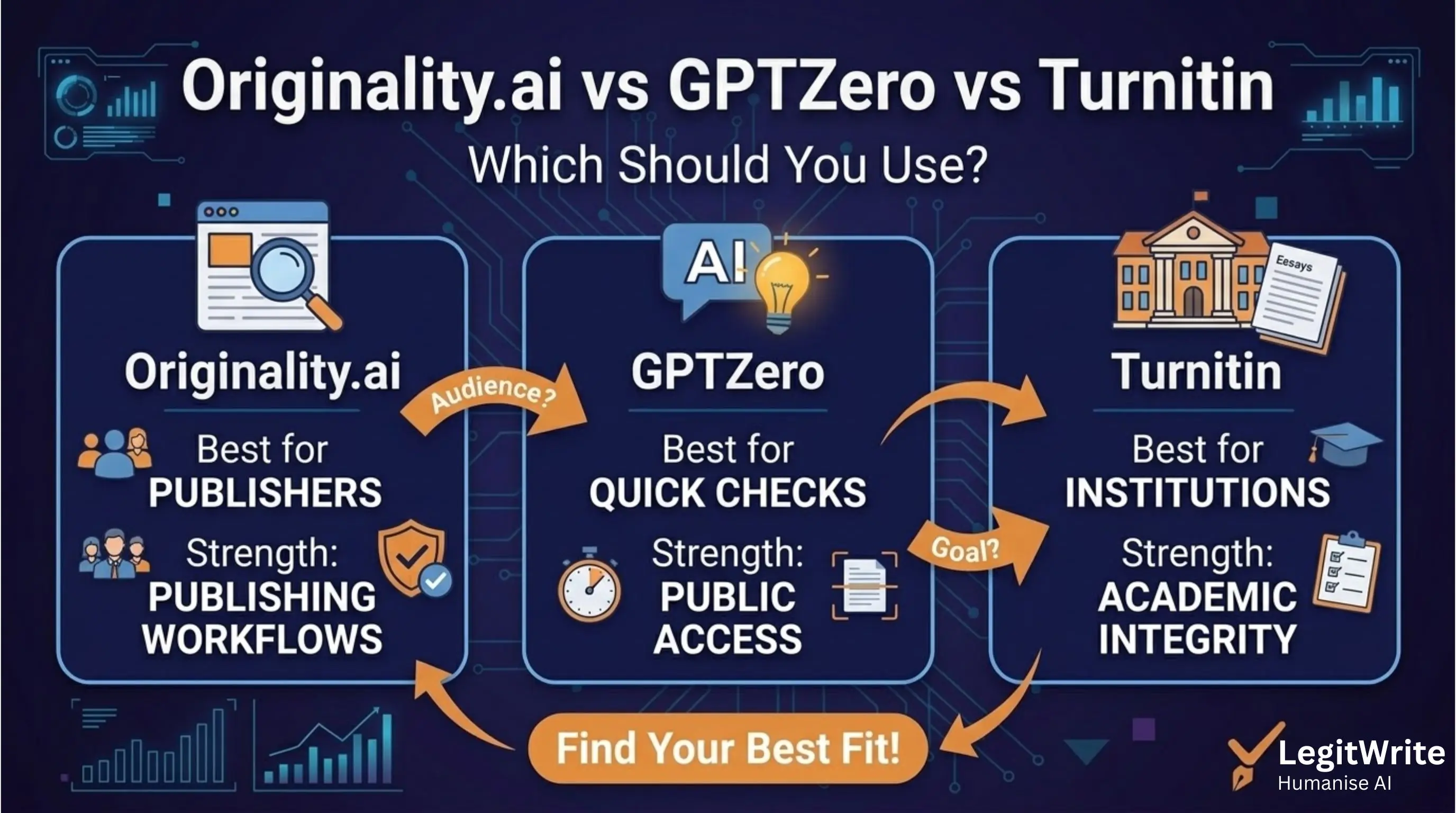

Here is the fastest way to understand the three tools:

| Tool | Best for | Strength | Weakness |
|---|---|---|---|
| Originality.ai | publishers, SEO teams, agencies | strong workflow for web content auditing | less aligned with classroom review norms |
| GPTZero | educators, quick checks, public-facing analysis | easy access and sentence-level explanations | more noise and more debate around false positives |
| Turnitin | universities and institutional submission systems | built into academic workflows and integrity processes | not available as a casual public detector for most users |

That means the "winner" depends entirely on your use case.

Why these three detectors get compared so often

These platforms dominate discussion because together they cover the three most visible AI detection contexts:

- academic institutions

- independent educators and students

- web publishers and content teams

Turnitin owns the institutional academic conversation. GPTZero owns a lot of the public conversation because it is easy to access and widely discussed. Originality.ai owns much of the SEO and agency conversation because it bundles AI detection with plagiarism and editorial scanning workflows.

So when people compare them, they are often really comparing three different ecosystems.

Turnitin is an institutional decision tool first

Turnitin matters because it is embedded inside the environments where the consequences are highest. Many students do not choose whether they interact with Turnitin. Their school chooses it for them.

This is significant because Turnitin is not just a scoring interface. It is part of a broader academic integrity process that includes:

- submission workflows

- instructor dashboards

- similarity reporting

- institutional review procedures

This makes Turnitin less of a standalone detector and more of an academic compliance layer.

From a practical standpoint, Turnitin is strongest when the institution wants a detector tightly connected to coursework review. It is weaker as a general-purpose commercial tool for people outside that ecosystem.

GPTZero is easiest to access, but easiest to over-interpret

GPTZero became highly visible because it presented AI detection in a way that felt immediate and understandable. It brought concepts like perplexity (how predictable a text is to a language model) and burstiness (how much that predictability varies from sentence to sentence) into the public conversation and gave teachers, editors, and curious users a tool they could test quickly.

That accessibility is a real strength. GPTZero helps people understand why a detector might classify text as AI-like, and its sentence-level framing can make the output feel intuitive.

But that same accessibility also encourages misuse. Because GPTZero is easy to try, people often treat it as a definitive answer rather than a probabilistic signal. It becomes the first tool someone uses and, too often, the last word they hear.

In practice, GPTZero is best for:

- quick document checks

- rough diagnostic review

- classroom conversations about AI-like writing patterns

- content teams that want a fast secondary detector

It is a weaker fit for high-stakes institutional workflows that need more process around the result.

Originality.ai is optimized for publishers, not classrooms

Originality.ai makes the most sense when the user is not trying to judge a student, but to audit content at scale.

That changes everything about the product philosophy. Publishers and agencies often care about:

- reviewing many documents quickly

- combining AI detection and plagiarism checks

- team reporting

- quality control before publication

Originality.ai fits that context well because it is built as an auditing tool rather than a classroom intervention system. It is especially relevant when content teams want a consistent pre-publish review layer across freelancers, contractors, or AI-assisted editorial workflows.

So if your world is blog publishing, SEO, or client delivery, Originality.ai often feels more native than Turnitin or GPTZero.

They do not score risk in exactly the same way

One reason cross-tool comparisons get messy is that the tools do not present their outputs with the same framing.

Turnitin tends to sit inside institutional workflows where the score is just one part of a wider review process. GPTZero leans heavily into probability and highlighted passages. Originality.ai positions itself as a content audit signal for professional teams.

That means the same text can feel very different depending on where you test it:

- one tool may highlight specific sentences

- another may show an overall percentage

- another may surface the result alongside plagiarism or workflow data

So a score is never just a score. It is also a product decision about how users are meant to interpret that score.

Which one is most accurate?

This is the question everyone asks, but it is usually framed too simply.

Accuracy depends on:

- document length

- genre

- formality

- whether the text has been edited

- whether the writing is academic, marketing, technical, or conversational

There is no universal "most accurate detector" across all contexts. Instead, there is a best-fit detector for a particular kind of text and workflow.

If you are reviewing academic submissions inside a university process, Turnitin often makes the most operational sense.

If you are reviewing web content at scale before publication, Originality.ai often makes the most operational sense.

If you need quick public-facing diagnostic checks or sentence-level explanations, GPTZero often feels the most accessible.

That is a very different answer from saying one tool is objectively superior everywhere.

False positives matter differently in each environment

False positives are not just a technical inconvenience. They matter differently depending on what happens after the detector score appears.

In Turnitin

A false positive can escalate into an academic integrity concern. That makes careful, disciplined interpretation of the score essential.

In GPTZero

A false positive can create panic quickly because the tool is easy to access and the highlighted output feels persuasive, even when the result should be treated cautiously.

In Originality.ai

A false positive may lead to editorial friction, revision loops, or rejection of a piece before publication.

So the same technical issue produces different downstream consequences depending on the detector's environment.

Which tool should students care about most?

Students should care most about the detector their institution actually uses.

That usually means Turnitin first. If a university routes assignments through Turnitin, then the public debate about GPTZero or Originality.ai matters less than the submission reality in front of you.

That said, GPTZero and Originality.ai still matter for students because they reveal broader detector logic:

- sentence predictability

- structural regularity

- low stylistic variation

- formulaic intros and conclusions

Even though each platform produces its own score, the writing patterns that trigger concern overlap substantially across detectors.

Which tool should publishers and agencies care about most?

For publishers, agencies, and editorial teams, Originality.ai is usually the first detector to evaluate because it fits operational publishing needs best.

That does not mean it should be the only one. In fact, many serious teams benefit from running content through more than one lens:

- Originality.ai for workflow auditing

- a secondary detector for spot checks

- human editorial review for meaning, voice, and brand fit

The key point is that publishing teams are solving a content governance problem, not an academic integrity problem. That makes Originality.ai feel more natural in that stack.

What this means if you are trying to reduce detector risk

A lot of people compare detectors because they want to know which one to test against before publication or submission. But the deeper issue is this: detector outputs differ, yet the underlying patterns they react to overlap.

Those patterns include:

- uniform sentence rhythm

- very predictable phrase transitions

- little structural variety across paragraphs (low structural entropy)

- overly clean, generic summary language

That is why trying to "beat" only one detector is usually the wrong mindset. If your writing remains structurally machine-like, switching detectors does not solve much. It just changes the label on the warning.
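To make "uniform sentence rhythm" more concrete, here is a minimal Python sketch that measures how much sentence length varies across a passage, using the coefficient of variation (standard deviation divided by mean). Treat it as an illustration under stated assumptions: it is not how Turnitin, GPTZero, or Originality.ai compute their scores, the sentence splitter is deliberately naive, and the function names `sentence_lengths` and `rhythm_variation` are hypothetical.

```python
import re
import statistics


def sentence_lengths(text: str) -> list[int]:
    """Return the word count of each sentence in `text`.

    Naive splitter (assumption): breaks after '.', '!' or '?' followed by
    whitespace, so abbreviations such as "e.g." will split incorrectly.
    """
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    return [len(s.split()) for s in sentences]


def rhythm_variation(text: str) -> float:
    """Coefficient of variation of sentence length (population stdev / mean).

    A hypothetical rough proxy for "sentence rhythm": lower values mean
    more uniform sentence lengths. Returns 0.0 for fewer than two sentences.
    """
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        return 0.0
    return statistics.pstdev(lengths) / statistics.mean(lengths)


uniform = "The tool is fast. The tool is cheap. The tool is good."
varied = (
    "It is fast. Pricing, on the other hand, depends heavily on volume "
    "and on which plan your team picks. Good."
)

print(round(rhythm_variation(uniform), 2))  # 0.0 (every sentence has 4 words)
print(round(rhythm_variation(varied), 2))   # 1.0 (lengths 3, 16 and 1)
```

A value near 0 means every sentence is roughly the same length; higher values mean a more varied rhythm. A single number like this is a crude signal, so it is better used as a prompt for human revision than as a prediction of what any particular detector will report.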

A smarter workflow than detector shopping

Instead of asking which single detector matters most, it helps to build a workflow:

- identify the review environment you care about

- understand the patterns that detector is likely to react to

- revise for natural variation and human structure

- validate against the relevant tool

- keep meaning, evidence, and citations stable

This is more useful than running the same text through five detectors and hoping one score looks nicer than the others.

So which should you use?

Use Turnitin if you are operating inside higher education and need to evaluate student work in an institutional process.

Use GPTZero if you need accessible, quick public checks and sentence-level interpretability.

Use Originality.ai if you publish or manage content at scale and need workflow-aware auditing.

And if your real problem is not detecting AI-like structure but reducing it while preserving meaning, then the more relevant question is what to do after the detector flags the text.

That is where detection and humanization become different stages of the same workflow.

Final takeaway

Originality.ai, GPTZero, and Turnitin are not interchangeable. They overlap in purpose, but they are strongest in different environments and should be judged by different criteria.

Turnitin is the institutional academic standard. GPTZero is the most accessible public-facing explainer. Originality.ai is the most workflow-native choice for publishers and agencies.

If you are trying to understand what happens after a detector raises concern, LegitWrite's Originality.ai vs LegitWrite comparison is the next useful step because it clarifies the difference between detecting AI-like structure and actually rewriting it so the text reads naturally again.